The Era of Agentic Search Engines

Traditional search engines are increasingly being supplemented, and in some use cases replaced, by agentic AI systems and LLM-based search products such as Perplexity and SearchGPT. These systems are even less forgiving than traditional crawlers of technical flaws such as duplicate content, spider traps, or chaotic URL structures caused by filter systems. Clean faceted navigation is no longer just a question of crawl budget; it is a prerequisite for AI agents to accurately grasp and recommend a shop's semantic structure and product portfolio.

- 1. The Dilemma of Faceted Navigation

- 2. The Core Problem: Crawl Budget Waste & Duplicate Content

- 3. Technical Solutions for Filter Management

- 4. The PRG Pattern: The Ultimate Solution

- 5. Link Attributes & Dofollow/Nofollow Strategies

- 6. Roadmap: Shop Audit & Implementation

- 7. Best Practices for URL Structure in E-Commerce

- 8. Frequently Asked Questions (Glossary)

1. The Dilemma of Faceted Navigation

To succeed in e-commerce, you must offer your customers a perfect User Experience (UX). A central element of this experience is the so-called Faceted Navigation (or simply "filter system"). Customers expect to be able to filter thousands of products with a few clicks by attributes like color, size, price, brand, material, or availability. From a UX perspective, this is an absolute must and significantly drives up the conversion rate.

From a Search Engine Optimization (SEO) perspective, however, faceted navigation is one of the most dangerous mechanisms you can implement in a web project. Why? Because of the so-called combinatorial explosion. If a shop owner isn't extremely careful and doesn't plan the architecture precisely, a seemingly harmless filter system transforms a shop with 10,000 products into a monster with millions of indexable URLs. Search engine crawlers like Googlebot get lost in these endless parameter combinations, leading to massive indexing issues, severe duplicate content, and ultimately a dramatic drop in search rankings.

In this comprehensive guide for Technical SEO 2.0, we deeply analyze how to master this challenge. We examine the mechanisms behind crawl budgets, evaluate the effectiveness of classic strategies like `rel="canonical"` and `noindex`, and introduce advanced architectural patterns like the PRG Pattern (Post-Redirect-Get). The goal is to equip you with the tools to structure massive shop systems so that they are perfectly optimized for both human shoppers and modern Agentic AI crawlers.

2. The Core Problem: Crawl Budget Waste & Duplicate Content

What is Crawl Budget?

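The Crawl Budget is the number of URLs a search engine like Google is able and willing to crawl on your website within a specific timeframe. This budget is not infinite. It is based on two main factors: the crawl capacity limit (how many requests your server can handle without slowing down) and crawl demand (how much Google wants to crawl your URLs, based on their popularity and how often they change).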

If your shop generates millions of URL combinations through filters, the crawler exhausts its budget analyzing worthless filter pages (e.g., "Red shoes, size 42, brand X, price $10-20"). The budget is depleted before the crawler even reaches your new, important, high-margin products or fresh blog articles. Valuable pages remain unindexed or outdated.

Spider Traps and the Combinatorial Explosion

A Spider Trap occurs when a system generates a practically unlimited number of crawlable URLs. A practical example: suppose a category page has 5 filter dimensions (brand, color, size, material, price), and each dimension has 10 options. Even if only one option per dimension can be active, this yields 11^5 = 161,051 filter states. If the order of URL parameters is also variable, each state is reachable under several URLs, and the total climbs past 13 million unique URLs, all showing almost exactly the same content.
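The arithmetic behind this example can be checked with a short sketch. It assumes that at most one option per dimension can be selected; the function names are illustrative and not part of any shop system.

```typescript
// Counts the distinct filter URLs a single category page can expose.
// Assumption: at most one option can be selected per dimension.
function factorial(n: number): number {
  let result = 1;
  for (let i = 2; i <= n; i++) result *= i;
  return result;
}

function binomial(n: number, k: number): number {
  return factorial(n) / (factorial(k) * factorial(n - k));
}

// Fixed parameter order: each dimension is either unset or has one of `options` values.
function countWithFixedOrder(dimensions: number, options: number): number {
  return Math.pow(options + 1, dimensions);
}

// Free parameter order: every permutation of the k active parameters is its own URL.
function countWithFreeOrder(dimensions: number, options: number): number {
  let total = 0;
  for (let k = 0; k <= dimensions; k++) {
    total += binomial(dimensions, k) * Math.pow(options, k) * factorial(k);
  }
  return total;
}

console.log(countWithFixedOrder(5, 10)); // 161051
console.log(countWithFreeOrder(5, 10)); // 13262051
```

Enforcing a fixed parameter order alone shrinks the URL space by roughly a factor of 80 in this example. If the shop also allows several options per dimension at once (multi-select), the number of combinations grows far beyond these figures.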

This phenomenon inevitably leads to Duplicate Content and, in the worst case, to keyword cannibalization, where Google no longer knows which URL is relevant for the keyword "red shoes", resulting in a ranking downgrade for all URLs.

The Nightmare: 1,000,000 URLs

A shop without technical SEO for filters generates URLs exponentially.

Crawl Budget Waste: 95%

Crawlers spend 95% of their time on irrelevant parameter URLs. Important products are indexed weeks after launch. Server costs explode due to bot traffic.

The Ideal Case: Clean Index

A shop with strict index management and PRG pattern.

Indexed URLs: ~15,000

Only the true category pages, SEO landing pages, and products are accessible. The crawl budget is sufficient for daily updates of the entire catalog. Top rankings.

3. Technical Solutions for Filter Management

To get a grip on the faceted navigation problem, there are various technical approaches. No single solution is perfect for all use cases, which is why a combination is often most effective. The three traditional main tools of an SEO specialist are the Canonical Tag, the meta robots tag (`noindex`), and the `robots.txt` file.

Meta Robots Tag (`noindex, follow`)

The most common and safest method to keep filter pages out of the index. The tag signals: "Do not index this page, but follow the links on it to other products." Caution: Over time, Google treats long-standing `noindex` pages as `noindex, nofollow` and stops following the links entirely.

Canonical Tag (`rel="canonical"`)

This tag points from a filter page (e.g., `/category?color=red`) to the main category (`/category`). It signals: "This is just a copy, pass all ranking signals to the original." The downside: it is only a hint for Google. If the content differs significantly due to filtering, it is often ignored. In addition, it saves no crawl budget, because Google still has to crawl the page to read the tag.

`robots.txt` (Disallow)

By using `Disallow: /*?filter=*` in robots.txt, you forbid the crawler from accessing matching parameter URLs. This stops crawl budget from being spent on these paths. Risks: Google cannot crawl links on these blocked pages, so if important products are only accessible via filter paths, they won't be indexed (Orphan Pages). A blocked URL can also still appear in the index without a snippet if other pages link to it, and Google cannot see a `noindex` tag on a page it is not allowed to crawl.
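To see which URLs a pattern like `Disallow: /*?filter=*` actually covers, the following sketch evaluates a single robots.txt path pattern against a URL. It handles only the `*` wildcard and a trailing `$`, and it ignores precedence between competing Allow and Disallow rules; the function names are illustrative.

```typescript
// Translates a robots.txt path pattern into a RegExp.
// Supported: "*" (any character sequence) and a trailing "$" (end of URL).
function robotsPatternToRegExp(pattern: string): RegExp {
  const anchoredAtEnd = pattern.length > 0 && pattern.charAt(pattern.length - 1) === "$";
  const body = anchoredAtEnd ? pattern.slice(0, -1) : pattern;
  const source = body
    .split("*")
    .map((part) => part.replace(/[.+?^${}()|[\]\\]/g, "\\$&")) // escape regex metacharacters
    .join(".*");
  return new RegExp("^" + source + (anchoredAtEnd ? "$" : ""));
}

// Checks one path (including its query string) against one Disallow pattern.
function isDisallowed(pathWithQuery: string, disallowPattern: string): boolean {
  return robotsPatternToRegExp(disallowPattern).test(pathWithQuery);
}

console.log(isDisallowed("/category?filter=red", "/*?filter=*")); // true
console.log(isDisallowed("/category?color=red&filter=nike", "/*?filter=*")); // false
console.log(isDisallowed("/category?color=red&filter=nike", "/*&filter=")); // true
```

The second call shows a common gap: the pattern only matches when `filter` is the first parameter. A second rule for `&filter=` is one way to cover URLs where it appears later in the query string.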

When to use Canonical vs. Noindex?

The debate between Canonical and Noindex is a classic in Technical SEO. As a rule of thumb in e-commerce for 2026: If a filter combination is relevant to users but offers no significant, independent value for search engines (e.g., "Shoes, Red, Size 42, Sort: Price ascending"), it should be marked with `noindex, nofollow` (or `noindex, follow` with the aforementioned caveats). Better yet, don't make these links accessible to crawlers at all (see PRG pattern in the next chapter).

The Canonical Tag should be reserved for slightly altered URLs that show absolutely identical content, such as tracking parameters (`?utm_source=...`) or session IDs. For true faceted navigation, the canonical tag alone is too weak and continues to waste massive crawl budget.
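For the tracking-parameter case, the canonical URL can be derived mechanically. The sketch below is a minimal illustration: the parameter list is an assumption and should be replaced with the parameters your own campaigns and session handling actually use.

```typescript
// Hypothetical list of parameters that never change the page content.
const TRACKING_PARAMS = ["utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content", "gclid", "sessionid"];

// Returns the URL without fragment and without tracking parameters.
function canonicalUrl(url: string): string {
  const hashIndex = url.indexOf("#");
  const withoutFragment = hashIndex === -1 ? url : url.slice(0, hashIndex);
  const queryIndex = withoutFragment.indexOf("?");
  if (queryIndex === -1) return withoutFragment;

  const base = withoutFragment.slice(0, queryIndex);
  const kept = withoutFragment
    .slice(queryIndex + 1)
    .split("&")
    .filter((pair) => pair.length > 0)
    .filter((pair) => TRACKING_PARAMS.indexOf(pair.split("=")[0].toLowerCase()) === -1);

  return kept.length > 0 ? base + "?" + kept.join("&") : base;
}

console.log(canonicalUrl("https://www.shop.com/category/sneakers?utm_source=newsletter&utm_medium=email"));
// https://www.shop.com/category/sneakers
```

Filter parameters such as `color` are deliberately left untouched here, since they change the content and need one of the other mechanisms discussed in this chapter.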

Code example for a Canonical implementation, shown as the rendered `<head>` output (for example from an Astro or Next.js template):

```html
<head>
  <!-- On the page: /category/sneakers?color=red&sort=price -->
  <link rel="canonical" href="https://www.shop.com/category/sneakers" />
  <meta name="robots" content="noindex, follow" />
</head>
```

This combination (Canonical + Noindex) was long considered "belt and suspenders" but is recommended by many experts today as robust insurance in case the search engine ignores one of the signals. Be aware that the two signals can be read as contradictory: the canonical says "treat this URL as a copy of the category", while `noindex` says "drop this URL". Ultimately, however, neither Canonical nor Noindex solves the problem of wasted server resources during crawling.

4. The PRG Pattern: The Ultimate Solution

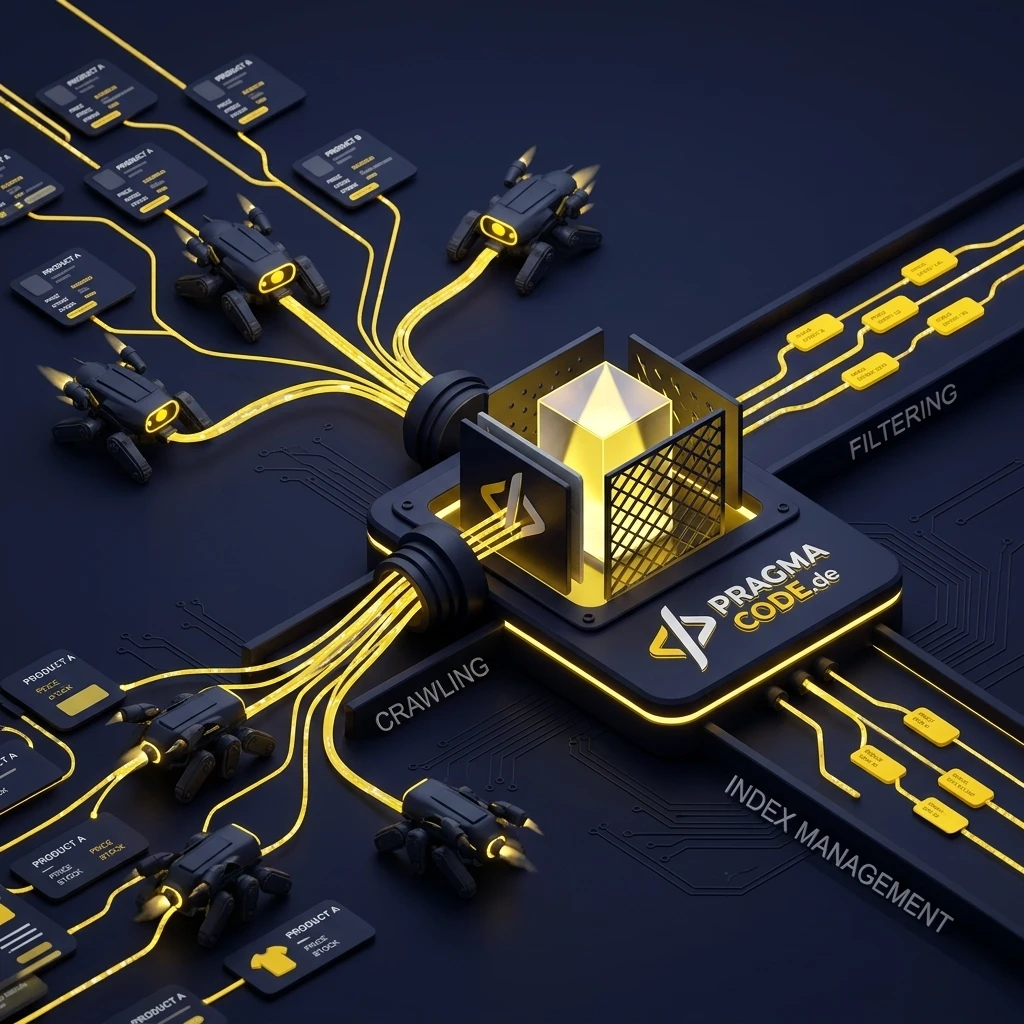

To permanently and elegantly solve the crawl budget problem of faceted navigation, modern enterprise shops rely on the PRG Pattern (Post-Redirect-Get). This architecture was originally developed to prevent duplicate form submissions (that annoying "Do you want to resubmit the form?"). In an SEO context, it's a brilliant method to make filter links invisible to crawlers without compromising user-friendliness.

How the PRG Pattern Works

Unlike standard `<a href="...">` links that a crawler mercilessly follows, filters in the PRG pattern are submitted as HTML forms using the `POST` method.

POST Request

The user clicks a filter (e.g., checkbox "Red"). The frontend sends a POST request to the server. Search engine crawlers generally do not submit POST forms, as this could change the server state. To the bot, the filter option is not a link it can follow.

REDIRECT

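The server receives the POST request, calculates the new filter URL (e.g., `/category?color=red`), and responds with a redirect to this new URL. HTTP status code 303 (See Other) is the precise choice because it tells the client to follow up with a GET request; 302 is also commonly used.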

GET Request

The user's browser follows the redirect and executes a normal GET request on the new filter URL. The filtered products are displayed.
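The redirect step can be sketched as a pure function that a server route would call after parsing the submitted form. This is a minimal sketch, not a complete handler: `categoryPath`, the field names, and the `RedirectResponse` shape are assumptions for illustration.

```typescript
interface RedirectResponse {
  status: number;
  location: string;
}

// Builds the redirect for a filter form that was submitted via POST.
// `categoryPath` and the field names are hypothetical; adapt them to your shop.
function handleFilterPost(categoryPath: string, formFields: Record<string, string>): RedirectResponse {
  const query = Object.keys(formFields)
    .filter((name) => formFields[name] !== "") // skip filters the user left empty
    .sort() // fixed parameter order: one URL per filter combination
    .map((name) => encodeURIComponent(name) + "=" + encodeURIComponent(formFields[name]))
    .join("&");

  return {
    status: 303, // "See Other": the browser follows up with a GET request
    location: query ? categoryPath + "?" + query : categoryPath,
  };
}

console.log(handleFilterPost("/category", { size: "42", color: "red", brand: "" }));
// { status: 303, location: "/category?color=red&size=42" }
```

Sorting the parameter names before building the URL is a design choice that also removes the "variable parameter order" source of duplicate URLs.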

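The SEO advantage: since search engines generally don't submit POST forms, they never discover the millions of filter combinations through your own navigation. There are no links in the source code for them to follow, so the crawl budget is preserved and no duplicate content is generated through internal linking. The filtered GET URLs still exist, however, and can be reached via external links or shared URLs, so they should still carry `noindex` or a canonical tag. At the same time, the system works smoothly for the human user and remains accessible, as native form elements are used.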

In modern JavaScript frameworks like React, Vue, or Next.js, the PRG pattern is often no longer strictly resolved via server-side HTTP redirects, but via client-side routing (e.g., `router.push()`) coupled with `<button>` elements instead of `<a>` tags. Since Googlebot does not click buttons and only extracts links from `<a>` elements with an `href` attribute, this achieves the same SEO effect. This is referred to as the "virtual PRG pattern".
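A framework-agnostic sketch of such a button handler is shown below. The `navigate` callback is a stand-in for whatever routing function your framework provides (for example `router.push` in Next.js); the function name and parameters are assumptions for illustration.

```typescript
type Navigate = (url: string) => void;

// Returns the click handler for a filter <button>.
// `navigate` stands in for the router function of your framework.
function createFilterClickHandler(
  categoryPath: string,
  facet: string,
  value: string,
  navigate: Navigate
): () => void {
  return () => {
    navigate(categoryPath + "?" + encodeURIComponent(facet) + "=" + encodeURIComponent(value));
  };
}

// Usage with a fake router that only records the target URL.
const visited: string[] = [];
const onRedClick = createFilterClickHandler("/category", "color", "red", (url) => {
  visited.push(url);
});
```

In the markup, the handler would be attached to something like `<button type="button">Red</button>`, so the filter URL never appears as an `href` in the rendered HTML.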

5. Link Attributes & Dofollow/Nofollow Strategies

If a complete refactoring of the shop architecture to the PRG pattern is too expensive or technically not (yet) feasible, many SEOs turn to HTML link attributes to steer the crawler. The most well-known attribute for this is `rel="nofollow"`.

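`rel="nofollow"`: The Filter Blocker

Appended to links the crawler should not follow (e.g., sorting links "Price ascending").

No `rel` attribute ("dofollow", the default): SEO Landing Pages

Used for filters intended to rank as standalone keyword landing pages (e.g., "Red Shoes"). Note that `rel="dofollow"` is not a valid attribute value; a link is followed simply because it carries no `nofollow`.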
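In a template, this split can be reduced to one decision per link. The following is a minimal sketch: the list of indexable facets is a hypothetical example, and the function assumes trusted input, so it performs no HTML escaping.

```typescript
// Hypothetical split of facets: these are the ones chosen to rank as landing pages.
const INDEXABLE_FACETS = ["brand", "color"];

// Renders a filter link; non-indexable facets get rel="nofollow".
// Assumes `href` and `label` are trusted values (no HTML escaping is done here).
function renderFilterLink(href: string, facet: string, label: string): string {
  const isIndexable = INDEXABLE_FACETS.indexOf(facet) !== -1;
  const rel = isIndexable ? "" : ' rel="nofollow"';
  return '<a href="' + href + '"' + rel + ">" + label + "</a>";
}

console.log(renderFilterLink("/shoes/red/", "color", "Red"));
// <a href="/shoes/red/">Red</a>
console.log(renderFilterLink("/shoes/?sort=price_asc", "sort", "Price ascending"));
// <a href="/shoes/?sort=price_asc" rel="nofollow">Price ascending</a>
```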

The problem with `nofollow` in 2026: Google now views `nofollow` merely as a "hint", not a strict directive. This means Google can decide to follow the link anyway if its algorithms deem it useful. Nevertheless, it remains a strong signal.

Another frequently discussed topic is so-called "PageRank Sculpting". In the past, it was thought that applying `nofollow` to filter links would funnel "link juice" (PageRank energy) towards important product links. This theory is long outdated. The energy of a nofollow link simply evaporates; it is not redistributed to other links. Therefore, while nofollow is useful for crawl budget, it's no magic bullet for better rankings.

6. Roadmap: Shop Audit & Implementation

The theoretical concepts are clear. But how do you actually proceed when analyzing and repairing an existing, organically grown e-commerce shop with acute indexing problems? Here is the professional workflow for a technical SEO audit of faceted navigation.

Logfile Analysis & Status Quo

Download the server log files (Apache, Nginx, Varnish) for the last 30 days. Analyze them with tools like Screaming Frog Log File Analyser or Splunk. Search for the user-agent "Googlebot". Identify which parameter URLs are crawled most frequently. Often, you will find tens of thousands of hits on obscure filter combinations while your top products are ignored.
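If no dedicated tool is at hand, a first overview can be produced with a few lines of code. The sketch below assumes access logs in the combined log format and matches Googlebot by user-agent substring only. Since user agents can be spoofed, treat the result as a rough indicator. The sample lines are made up for illustration.

```typescript
// Counts Googlebot hits per URL parameter name in access-log lines
// (assumed to be in combined log format).
function countGooglebotParameterHits(logLines: string[]): Record<string, number> {
  const counts: Record<string, number> = {};
  const requestPattern = /"(?:GET|HEAD) (\S+) HTTP\/[\d.]+"/;

  for (const line of logLines) {
    if (line.indexOf("Googlebot") === -1) continue; // user-agent substring check only
    const match = requestPattern.exec(line);
    if (!match) continue;

    const url = match[1];
    const queryIndex = url.indexOf("?");
    if (queryIndex === -1) continue; // no parameters, not a filter URL

    for (const pair of url.slice(queryIndex + 1).split("&")) {
      const name = pair.split("=")[0];
      if (name) counts[name] = (counts[name] || 0) + 1;
    }
  }
  return counts;
}

// Made-up sample lines for illustration.
const sampleLog = [
  '66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /shoes?color=red&size=42 HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
  '66.249.66.1 - - [10/Jan/2026:10:00:05 +0000] "GET /shoes?color=blue HTTP/1.1" 200 5090 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
  '203.0.113.7 - - [10/Jan/2026:10:00:09 +0000] "GET /shoes?color=red HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
];

console.log(countGooglebotParameterHits(sampleLog)); // { color: 2, size: 1 }
```

Parameters with the highest counts are the first candidates for blocking or for a move to the PRG pattern.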

Identification of SEO-relevant Facets

Not all filters are SEO poison. Some filter combinations have high search volume and should exist as independent landing pages. For example: The "Shoes" category filtered by color "Red" and brand "Nike" serves the search query "Nike red shoes". These facets must remain indexable, receive their own semantic URLs (Clean URLs), and feature optimized H1 headings. All other combinations (e.g., price $20-$30, size 42) are irrelevant for SEO.

Implementation of Blocking Mechanisms

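For all irrelevant filters: set the meta robots tag to `noindex` and ensure the canonical tag points to the parent category. For radical crawl budget savings, block unimportant parameter patterns in robots.txt instead, but only after the affected URLs have dropped out of the index, because Google cannot read a `noindex` tag on a URL it is not allowed to crawl. Evaluate whether the PRG Pattern can be implemented by your development agency.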

Monitoring and Re-Evaluation via Search Console

Strictly monitor the "Page Indexing" report in Google Search Console. Depending on the mechanisms you chose, the number of URLs under "Excluded by 'noindex' tag", "Alternate page with proper canonical tag", or "Blocked by robots.txt" should increase massively, while important product pages listed under "Crawled - currently not indexed" and other indexing errors must decrease dramatically. Watch the indexing status over the coming weeks.

7. Best Practices for URL Structure in E-Commerce

The way URLs are generated in your shop often dictates the success of your SEO strategy. Search engines prefer clean, understandable hierarchies. Avoid endless query parameter chains. Compare these approaches:

Parameter chain (avoid): https://www.shop.com/catalog?category=123&brand=nike&color=red&sort=price_asc

Clean URL: https://www.shop.com/shoes/nike/red/

Hybrid approach: https://www.shop.com/shoes/nike/red/?sort=price_asc&size=42 (where size=42 runs in the PRG pattern or is noindex)

In the hybrid approach, SEO-relevant dimensions (category, brand, main color) are integrated into the URL path (so-called "Clean URLs"). These pages are indexable, have optimized meta tags, and unique texts. All volatile, temporary, or user-specific filters (size, sorting, price range) are appended as query parameters (`?`). These parameter URLs are then strictly kept out of the index via `noindex`, or kept out of the crawl via `robots.txt` (not both for the same URL, since the `noindex` on a blocked page cannot be read), or ideally hidden using the PRG pattern.
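The hybrid approach can be sketched as a small URL builder. Which facets go into the path is a business decision; the `PATH_FACETS` list and the function below are assumptions for illustration, not a description of any particular shop system.

```typescript
// Hypothetical facet split: path facets become indexable clean URLs,
// everything else stays a query parameter. The order defines the path hierarchy.
const PATH_FACETS = ["brand", "color"];

interface FacetUrl {
  url: string;
  indexable: boolean;
}

function buildFacetUrl(category: string, selected: Record<string, string>): FacetUrl {
  let path = "/" + category + "/";
  for (const facet of PATH_FACETS) {
    if (selected[facet]) path += encodeURIComponent(selected[facet]) + "/";
  }

  const query = Object.keys(selected)
    .filter((name) => PATH_FACETS.indexOf(name) === -1 && selected[name] !== "")
    .sort() // fixed order avoids duplicate URLs for the same state
    .map((name) => encodeURIComponent(name) + "=" + encodeURIComponent(selected[name]))
    .join("&");

  return {
    url: query ? path + "?" + query : path,
    indexable: query === "", // only pure path URLs are meant to be indexed
  };
}

console.log(buildFacetUrl("shoes", { color: "red", brand: "nike" }));
// { url: "/shoes/nike/red/", indexable: true }
console.log(buildFacetUrl("shoes", { brand: "nike", color: "red", sort: "price_asc", size: "42" }));
// { url: "/shoes/nike/red/?size=42&sort=price_asc", indexable: false }
```

The `indexable` flag can then drive the meta robots tag in the page template, so the path/parameter split and the index rules cannot drift apart.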

This architectural separation between "content" (path) and "view/state" (parameters) is the secret to successful e-commerce SEO strategies used by market leaders like Zalando, AboutYou, or Otto.

In summary: Technical SEO in e-commerce is not a one-time project, but a continuous technical discipline. Mastering Faceted Navigation requires close collaboration between SEO specialists, Data Analysts, and Software Architects. Those who invest in modern approaches like the PRG pattern and strict index management lay the foundation for massive organic scaling – securing a decisive competitive advantage in the era of Agentic AI and Generative Search.

8. Frequently Asked Questions (Glossary)

What is Faceted Navigation?

Faceted Navigation is a user interface pattern that allows users to narrow down results by applying multiple filters (facets) like color, size, or brand simultaneously. It is essential for E-commerce UX, but risky for SEO.

What is Crawl Budget?

Crawl Budget refers to the number of URLs a search engine bot (like Googlebot) can and wants to crawl on a website within a certain timeframe.

What is the Canonical Tag?

The Canonical Tag (rel="canonical") is an HTML element that tells search engines which URL is the "main version" of a page to avoid duplicate content.

What is the PRG Pattern?

The Post-Redirect-Get (PRG) Pattern is a web development pattern. In SEO, it is used to submit filter links as POST forms, making them invisible to search engines.

What is a Spider Trap?

A Spider Trap is a structural error on a website that causes crawlers to get caught in an endless loop of dynamically generated URLs, wasting massive amounts of crawl budget.
