Introduction: The End of the Waiting Line

In an increasingly digital world, customer expectations for service have risen dramatically. No one wants to be stuck in endless phone queues or wait days for a response to a simple email inquiry anymore. For small and medium-sized enterprises (SMEs) and startups, this poses an immense challenge: How can they guarantee excellent 1st-level support, ideally available 24/7, without personnel costs exploding?

The answer lies in intelligent automation through AI chatbots and modern voice agents. What used to be reserved for large corporations is now accessible, scalable, and economically viable for SMEs thanks to publicly available APIs (like those from OpenAI, Anthropic, or specialized European providers) and flexible architectures. This article examines the strategic, technical, and economic dimensions of implementing AI in customer service and shows why now is the right time to take the step toward an automated future.

The Evolution of Customer Service: From IVR Menus to Fluent Conversation

Do you remember the days of "Press 1 for sales, 2 for..."? These IVR (Interactive Voice Response) systems were the first attempt to route inquiries. But they were rigid, frustrating, and not very customer-oriented. The next generation was rule-based chatbots, which often failed as soon as a customer phrased a question even slightly differently than expected. "I'm sorry, I didn't understand your question" was the most common response.

Today we stand at a turning point. Large Language Models (LLMs) have revolutionized the ability of machines to understand natural human language in context. Combined with advanced speech synthesis (Text-to-Speech) and speech recognition (Speech-to-Text), voice agents are emerging that can conduct fluent, natural, and above all goal-oriented dialogues. The era of rigid, frustrating bot interactions is coming to an end. A modern AI agent copes far better with irony and complex nested sentences and, with suitable audio models, can even pick up emotional cues from the caller's voice.

Use Cases: Where AI Agents Shine in the SME Sector

Not every customer contact should be automated. The key to success lies in identifying the right use cases for 1st-level support. AI agents are particularly strong here:

An AI voice agent knows no closing time and no time zones. Inquiries received at night or on weekends are processed immediately in real-time.

While a human team is overloaded during unexpected inquiry peaks (e.g., after a newsletter dispatch or during a major malfunction), a cloud-based AI solution scales effortlessly.

The bot collects the customer number, the exact concern, and any error messages before, if necessary, handing the case over, fully prepared, to a human 2nd-level agent.

From appointment booking and password resets to simple address changes: AI systems can call the backend (CRM/ERP) directly via API and complete processes end-to-end.

Deeper Insight: The Voice Agent as an Empathetic Listener

While text chatbots are now widespread, the integration of voice agents represents the next major leap. Technologies like OpenAI's Advanced Voice Mode have demonstrated how much latency and intonation matter for a natural conversational experience. A voice agent can be interrupted in real time (barge-in), reacts to pauses in the caller's speech, and adapts its own speaking speed to the user.

This is particularly relevant for target groups who avoid text-based chat or prefer direct, audible contact for urgent problems (e.g., an IT emergency or a malfunction in the e-commerce shop). Natural voice input drastically lowers the barrier to using an automated assistant. The result is a "zero-UI" experience: the user interface is pure conversation.
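A minimal sketch of the barge-in logic, assuming the audio stream arrives as short frames with a precomputed RMS energy value. The class name, the energy threshold, and the required number of frames are illustrative assumptions; production systems typically use a trained voice activity detection (VAD) model rather than a fixed energy threshold.

```python
from dataclasses import dataclass, field


@dataclass
class BargeInDetector:
    """Hypothetical barge-in detector based on a simple energy threshold."""

    energy_threshold: float = 0.02  # assumed RMS level that counts as caller speech
    min_speech_frames: int = 3      # assumed: consecutive speech frames (e.g. 3 x 20 ms)
    _speech_run: int = field(default=0, init=False, repr=False)

    def on_frame(self, rms_energy: float, agent_speaking: bool) -> bool:
        """Return True when TTS playback should stop because the caller started talking."""
        if not agent_speaking:
            # Nothing to interrupt; reset the counter.
            self._speech_run = 0
            return False
        if rms_energy >= self.energy_threshold:
            self._speech_run += 1
        else:
            self._speech_run = 0  # a short noise burst should not trigger barge-in
        return self._speech_run >= self.min_speech_frames


detector = BargeInDetector()
frames = [0.001, 0.03, 0.04, 0.05, 0.01]
print([detector.on_frame(e, agent_speaking=True) for e in frames])
# [False, False, False, True, False]
```

Requiring several consecutive speech frames keeps a cough or a background click from cutting the agent off. Once `on_frame` returns True, the pipeline would stop TTS playback and route the caller's audio to the Speech-to-Text stage.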

Technical Architecture: How to Build an Enterprise-Ready AI Agent?

An intelligent agent consists of much more than just an API call to ChatGPT. For professional use in the SME sector, a robust, secure, and data-protection-compliant architecture is required. At Pragma Code, we rely on modern best practices to ensure exactly that.

The Large Language Model (LLM) as the Core Engine

The heart of the system is the model that handles language understanding and generation. Depending on the use case and data protection requirements, we orchestrate specialized models (like GPT-5.5, Claude 3.5 Sonnet, or locally hosted open-source models like Llama 3 for maximum data sovereignty).

Retrieval-Augmented Generation (RAG)

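LLMs tend to hallucinate when they have to guess facts. RAG connects the model to your specific corporate knowledge (FAQs, manuals, wiki). Before answering, the agent retrieves the most relevant passages from your documents and uses them as context. This grounds its answers in your own content and greatly reduces, though does not entirely eliminate, hallucinations.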
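The retrieval step can be sketched in a few lines. To keep the example self-contained, it swaps in a simple bag-of-words cosine similarity for the embedding model and vector database a production RAG system would use; the function names, the FAQ entries, and the prompt wording are illustrative assumptions.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens (a stand-in for a real embedding model)."""
    return re.findall(r"\w+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str]) -> str:
    """Assemble a grounded prompt: the model may only answer from retrieved context."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents))
    return (
        "Answer using ONLY the context below. "
        "If the answer is not in the context, say that you don't know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


faq = [
    "Orders ship within 2 business days.",
    "You can reset your password under Account > Security.",
    "Returns are accepted within 30 days of delivery.",
]
print(retrieve("How do I reset my password?", faq, k=1))
# ['You can reset your password under Account > Security.']
```

The instruction to admit ignorance when the context lacks the answer matters as much as the retrieval itself: it gives the model an explicit alternative to guessing.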

Voice Pipeline (STT & TTS)

For voice agents, we need extremely fast Speech-to-Text (STT, e.g., Whisper) and Text-to-Speech (TTS, e.g., ElevenLabs or the OpenAI Audio API) components. To feel natural, the total latency (voice-in to voice-out) should stay below roughly 1,000 milliseconds.
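Because the stages run one after another, their latencies add up. The sketch below checks a latency budget against the 1,000 ms target; the stage names and per-stage values are illustrative assumptions, not measurements. In practice the stages are usually streamed (the LLM's first tokens are passed to TTS before the full answer exists), so what counts is the time until the first audio is played, not the time to generate the whole response.

```python
BUDGET_MS = 1000  # target from the text: voice-in to voice-out

# Assumed time-to-first-output per stage in milliseconds (illustrative, not measured).
pipeline_ms = {
    "stt_final_transcript": 300,
    "llm_first_token": 350,
    "tts_first_audio": 200,
    "network_overhead": 100,
}


def check_budget(stages: dict[str, int], budget_ms: int = BUDGET_MS) -> tuple[int, bool]:
    """Sum the sequential stage latencies and compare them with the budget."""
    total = sum(stages.values())
    return total, total < budget_ms


total, within = check_budget(pipeline_ms)
print(f"voice-in to voice-out: {total} ms, within budget: {within}")
# voice-in to voice-out: 950 ms, within budget: True
```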

Backend Integration & Tool Calling

An agent that only talks is nice. An agent that acts is valuable. Via secure API endpoints (e.g., in microservices via Node.js or directly connected to n8n), the agent triggers actions in the HubSpot CRM, in the Shopware backend, or in the Jira ticketing system.
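A minimal sketch of tool calling on the application side, assuming the model has already returned a tool call as JSON with `name` and `arguments` fields. That JSON shape is a simplification: OpenAI, Anthropic, and other providers each define their own tool-call format. The two tools are hypothetical stubs standing in for real HubSpot, Shopware, or Jira calls.

```python
import json
from typing import Any, Callable


# Hypothetical backend actions; real versions would call CRM/ERP/ticketing APIs.
def create_ticket(subject: str, priority: str = "normal") -> dict[str, Any]:
    return {"ticket_id": "T-1001", "subject": subject, "priority": priority}


def get_delivery_status(order_id: str) -> dict[str, Any]:
    return {"order_id": order_id, "status": "in_transit"}


# Allowlist: the model can only trigger functions registered here.
TOOLS: dict[str, Callable[..., dict[str, Any]]] = {
    "create_ticket": create_ticket,
    "get_delivery_status": get_delivery_status,
}


def dispatch(tool_call_json: str) -> dict[str, Any]:
    """Execute a model-proposed tool call if the tool is allowed and the arguments fit."""
    call = json.loads(tool_call_json)
    name, args = call.get("name"), call.get("arguments", {})
    tool = TOOLS.get(name)
    if tool is None:
        return {"error": f"unknown tool: {name}"}
    try:
        return tool(**args)
    except TypeError as exc:  # missing or unexpected arguments from the model
        return {"error": str(exc)}


print(dispatch('{"name": "get_delivery_status", "arguments": {"order_id": "4711"}}'))
# {'order_id': '4711', 'status': 'in_transit'}
```

The key design choice is the allowlist: the model only proposes actions, and the application decides what actually runs. Errors are returned as data so they can be fed back to the model instead of crashing the conversation.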

Data Protection and Compliance: Myths vs. Reality

Many SMEs hesitate to use AI out of concern for data protection (GDPR). These concerns are justified, but they can be addressed both technically and contractually. When Pragma Code implements a solution, we ensure that clear boundaries are drawn. We use enterprise APIs where the providers (e.g., Microsoft Azure, OpenAI) contractually guarantee that transmitted customer data is not used to train their global foundation models.

For highly sensitive areas (finance, medical, legal), we rely on fully locally hosted "on-premise" LLMs. Here, not a single data packet leaves the internal company network or the European data center. The combination of privacy-friendly architecture and clearly defined deletion routines makes GDPR-compliant AI customer service achievable today.
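A deletion routine can be as simple as a scheduled job that removes conversation transcripts once a retention period has expired. The sketch below assumes an in-memory list of transcripts and a 30-day retention period; both are illustrative assumptions. A real job would run against the actual storage (database, logs, vector store) and would also need to cover backups.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

RETENTION_DAYS = 30  # assumed value; the real period comes from your data protection concept


@dataclass
class Transcript:
    conversation_id: str
    created_at: datetime


def purge_expired(
    transcripts: list[Transcript], now: datetime, retention_days: int = RETENTION_DAYS
) -> tuple[list[Transcript], list[str]]:
    """Split transcripts into those to keep and the IDs of those to delete."""
    cutoff = now - timedelta(days=retention_days)
    keep = [t for t in transcripts if t.created_at >= cutoff]
    deleted = [t.conversation_id for t in transcripts if t.created_at < cutoff]
    return keep, deleted


now = datetime(2025, 3, 31, tzinfo=timezone.utc)
data = [
    Transcript("c1", datetime(2025, 1, 15, tzinfo=timezone.utc)),
    Transcript("c2", datetime(2025, 3, 20, tzinfo=timezone.utc)),
]
keep, deleted = purge_expired(data, now)
print(deleted)  # ['c1']
```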

"The true value of AI in customer service lies not in replacing human employees, but in freeing them from repetitive standard tasks so they can dedicate themselves to complex, empathy-demanding problems."

Roadmap for Implementation: In 5 Steps to Intelligent Support

The introduction of an AI chat or voice agent should be structured. A hasty start without a clean data foundation inevitably leads to frustrating customer experiences.

Step 1: Scope & Use-Case Definition

Analysis of the most common customer inquiries (Pareto principle: Which 20% of questions cause 80% of the effort?). Definition of the systems (e.g., Zendesk, Shopify) the agent needs to integrate with.
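A minimal sketch of the Pareto analysis, assuming you can export one category label per ticket from your helpdesk. It uses ticket counts as a proxy for effort; if handling times are available, weighting each category by its total handling time would be more accurate. The category names and counts are hypothetical.

```python
from collections import Counter


def pareto_categories(ticket_categories: list[str], share: float = 0.8) -> list[tuple[str, int]]:
    """Return the most frequent categories that together cover `share` of all tickets."""
    counts = Counter(ticket_categories).most_common()  # sorted by frequency, descending
    total = sum(count for _, count in counts)
    selected: list[tuple[str, int]] = []
    covered = 0
    for category, count in counts:
        if covered >= share * total:
            break  # target share reached; remaining categories stay with humans for now
        selected.append((category, count))
        covered += count
    return selected


tickets = (
    ["order_status"] * 40 + ["password_reset"] * 25 + ["address_change"] * 15
    + ["invoice_question"] * 10 + ["complaint"] * 6 + ["other"] * 4
)
print(pareto_categories(tickets))
# [('order_status', 40), ('password_reset', 25), ('address_change', 15)]
```

The categories returned this way are natural candidates for the agent's first release.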

Step 2: Knowledge Base Preparation

Preparation of corporate data for the RAG system. Unstructured data (PDFs, Word documents) is cleaned, split into smaller chunks, converted into embeddings, and stored in a vector database.
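A minimal sketch of the cleaning and chunking step, assuming plain text has already been extracted from the PDFs and Word files. It splits by characters with an overlap, so that context at a chunk boundary is not lost entirely; the chunk size and overlap are illustrative assumptions, and many pipelines split by tokens, sentences, or document headings instead. The embedding and vector-database steps are not shown because they depend on the chosen provider.

```python
import re


def clean_text(raw: str) -> str:
    """Collapse whitespace and line breaks left over from PDF/Word extraction."""
    return re.sub(r"\s+", " ", raw).strip()


def chunk_text(text: str, chunk_size: int = 500, overlap: int = 100) -> list[str]:
    """Split text into overlapping character windows, ready for embedding."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - overlap
    chunks: list[str] = []
    for start in range(0, len(text), step):
        chunk = text[start:start + chunk_size].strip()
        if chunk:
            chunks.append(chunk)
        if start + chunk_size >= len(text):
            break  # the last window already reaches the end of the text
    return chunks


print(clean_text("  Returns are\n\naccepted   within 30 days. "))
# Returns are accepted within 30 days.
print(chunk_text("abcdefghij", chunk_size=4, overlap=1))
# ['abcd', 'defg', 'ghij']
```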

Step 3: Prototyping & System Prompting

Development of the core agent, definition of the persona (tone, politeness), and the "guardrails" (what must the bot *under no circumstances* say or do?). Initial tests in a closed sandbox environment.
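The persona and guardrails from Step 3 usually live in the system prompt, often backed by an output-side check before a reply reaches the customer. The sketch below is illustrative only: the shop name "ExampleShop", the rules, and the blocked patterns are hypothetical, and a keyword filter is a last safety net, not a substitute for careful prompting and testing.

```python
import re

# Hypothetical persona and rules for an illustrative shop; adapt to your own use case.
SYSTEM_PROMPT = """You are the friendly 1st-level support assistant of ExampleShop.
- Answer politely and concisely, in the customer's language.
- Only answer questions about orders, shipping, returns, and account access.
- Never give legal, medical, or financial advice.
- Never promise refunds or discounts; offer a handoff to a human colleague instead.
- If you are unsure, say so and offer to connect the customer with a human."""

# Output-side safety net: phrases the bot must never send (illustrative patterns).
BLOCKED_PATTERNS = [r"\brefund (is )?guaranteed\b", r"\bdiscount code\b", r"\blegal advice\b"]

FALLBACK_REPLY = "I can't help with that here, but I'm happy to connect you with a colleague."


def violates_guardrails(reply: str) -> bool:
    """True if the model's draft reply matches any blocked pattern."""
    return any(re.search(pattern, reply, flags=re.IGNORECASE) for pattern in BLOCKED_PATTERNS)


def safe_reply(draft: str) -> str:
    """Replace a violating draft with a neutral fallback before it reaches the customer."""
    return FALLBACK_REPLY if violates_guardrails(draft) else draft


print(safe_reply("Your parcel ships tomorrow."))
# Your parcel ships tomorrow.
print(safe_reply("Here is a discount code: SAVE20"))
# I can't help with that here, but I'm happy to connect you with a colleague.
```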

Step 4: Integration into the Ecosystem (Tool Calling)

Connection to backend systems. The bot learns to actively create tickets, retrieve delivery status from the ERP, or book calendar appointments. Development of specific fallback routines (human handoff).

Step 5: Soft Launch & Iterative Fine-Tuning

Rollout to a small test user group. Continuous monitoring of transcripts, identification of "edge cases," and iterative refinement of prompts and the RAG knowledge base for maximum accuracy.
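Transcript monitoring during the soft launch can start with two simple signals: the containment rate (the share of conversations closed without a human handoff) and a list of conversations worth reading by hand. A minimal sketch, assuming a hypothetical `Conversation` record and assumed fallback phrases; a high containment rate alone can hide conversations in which customers simply gave up, which is why flagged transcripts still need manual review.

```python
from dataclasses import dataclass

# Assumed phrases that indicate the bot could not help (adjust to your bot's wording).
FALLBACK_MARKERS = ("i'm sorry, i didn't understand", "i don't know")


@dataclass
class Conversation:
    conversation_id: str
    bot_turns: list[str]
    handed_off: bool


def review_transcripts(conversations: list[Conversation]) -> tuple[float, list[str]]:
    """Return the containment rate and the IDs of conversations worth a manual review."""
    if not conversations:
        return 0.0, []
    flagged = [
        c.conversation_id
        for c in conversations
        if c.handed_off
        or any(marker in turn.lower() for turn in c.bot_turns for marker in FALLBACK_MARKERS)
    ]
    resolved = sum(1 for c in conversations if not c.handed_off)
    return resolved / len(conversations), flagged


sample = [
    Conversation("a", ["Your order ships tomorrow."], handed_off=False),
    Conversation("b", ["I'm sorry, I didn't understand your question."], handed_off=False),
    Conversation("c", ["Let me connect you with a colleague."], handed_off=True),
]
rate, to_review = review_transcripts(sample)
print(f"containment: {rate:.0%}, review: {to_review}")
# containment: 67%, review: ['b', 'c']
```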

The Human in the Loop: The Human Handoff Process

The most important function of any automated system is the ability to recognize its own limits. A well-designed AI agent notices when the user becomes frustrated, when the problem is too complex, or when sensitive topics come up.

In this case, the system initiates a seamless "human handoff": the chat or call is transferred to a human employee. The highlight: The human agent immediately receives an LLM-generated summary of the conversation so far, including the detected emotion and the sub-steps already solved. The customer does not have to explain their problem again, and the support employee can start working on a solution right away.
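A minimal sketch of both halves of the handoff: a trigger check and the package the human agent receives. The trigger phrases, topics, and turn threshold are illustrative assumptions; keyword matching is deliberately crude here, and in practice the LLM itself or a sentiment classifier would usually judge frustration. The summary and emotion fields would be filled by an LLM call that is not shown.

```python
from dataclasses import dataclass, field
from typing import Optional

# Illustrative trigger lists and threshold (assumptions; tune per use case).
FRUSTRATION_PHRASES = ("useless", "ridiculous", "speak to a human", "real person")
SENSITIVE_TOPICS = ("lawyer", "data breach", "cancel my contract")
MAX_UNRESOLVED_TURNS = 4


def needs_handoff(user_message: str, unresolved_turns: int) -> bool:
    """Heuristic check whether the conversation should go to a human."""
    text = user_message.lower()
    return (
        any(phrase in text for phrase in FRUSTRATION_PHRASES)
        or any(topic in text for topic in SENSITIVE_TOPICS)
        or unresolved_turns >= MAX_UNRESOLVED_TURNS
    )


@dataclass
class HandoffPackage:
    """What the human agent receives together with the chat or call."""
    customer_id: Optional[str]
    summary: str           # in practice generated by the LLM from the transcript
    detected_emotion: str  # e.g. from the LLM or a sentiment classifier
    solved_steps: list[str] = field(default_factory=list)


if needs_handoff("This is useless, I want to speak to a human!", unresolved_turns=2):
    package = HandoffPackage(
        customer_id="K-12345",
        summary="Customer cannot log in; password reset link was sent twice without success.",
        detected_emotion="frustrated",
        solved_steps=["identity verified", "reset link resent"],
    )
    print(package.detected_emotion, package.solved_steps)
    # frustrated ['identity verified', 'reset link resent']
```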

Profitability: ROI and Business Value

For companies with high support volumes, the investment in a tailor-made AI agent usually pays for itself within 6 to 12 months. The cost advantages result less from personnel savings than from a substantial increase in efficiency. 1st-level employees no longer spend their time manually resetting passwords or changing addresses; instead, they can move up to 2nd-level work and take on value-adding tasks such as up-selling or resolving specific complaints.

Cost Reduction for Basic Inquiries

The cost per interaction (Cost per Contact) drops from typically 5-10 Euros (human channel) to a few cents in the automated AI channel.
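The payback period follows from simple arithmetic. The sketch below takes 7.50 EUR as a midpoint of the 5 to 10 euro range above; the contact volume, automation rate, AI cost per contact, and investment are purely hypothetical. It also ignores fixed running costs such as hosting and maintenance, so a real payback period will be somewhat longer.

```python
def monthly_savings(contacts_per_month: int, automation_rate: float,
                    human_cost_eur: float, ai_cost_eur: float) -> float:
    """Cost saved per month by handling a share of contacts in the AI channel."""
    automated_contacts = contacts_per_month * automation_rate
    return automated_contacts * (human_cost_eur - ai_cost_eur)


def payback_months(investment_eur: float, savings_per_month_eur: float) -> float:
    """Months until the one-off investment is recovered (fixed running costs ignored)."""
    if savings_per_month_eur <= 0:
        raise ValueError("savings must be positive to reach payback")
    return investment_eur / savings_per_month_eur


# Hypothetical example: 2,000 contacts/month, 40% automated,
# 7.50 EUR per human contact, 0.05 EUR per AI contact, 40,000 EUR investment.
savings = monthly_savings(2000, 0.40, 7.50, 0.05)
print(f"monthly savings: {savings:.0f} EUR")  # monthly savings: 5960 EUR
print(f"payback: {payback_months(40_000, savings):.1f} months")  # payback: 6.7 months
```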

Revenue Increase Through Availability

Customers who receive fast help on weekends (e.g., in e-commerce during the checkout process) are less likely to abandon the purchase.

Increased Employee Satisfaction

Staff turnover in call centers and support departments tends to drop when monotonous work is automated and employees take on more qualified tasks.

Conclusion: From Reactive Support to a Proactive Service Experience

AI chatbots and voice agents are far more than a passing technology hype. They are fundamental tools for sustainably scaling 1st-level support, drastically improving service quality, and securing competitive advantages in the SME sector.

At Pragma Code, we accompany you from the initial use-case discovery to the implementation of robust, secure, and GDPR-compliant agents. We do not build "off-the-shelf" products, but integrate AI deeply into your existing systems to generate real business value. The future of customer service talks to you, understands you, and acts for you. Be ready for it.

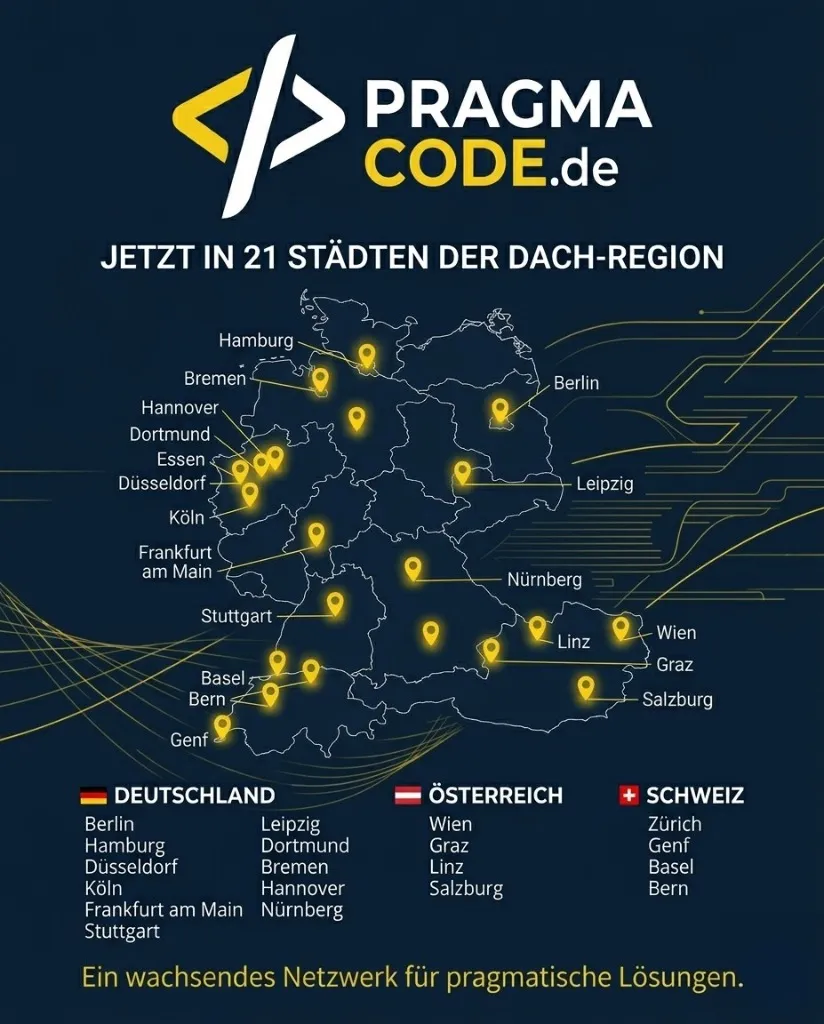

Extended Specialized Glossary

LLM (Large Language Model)

An AI model trained on massive amounts of text to generate context-aware, human-like responses.

RAG (Retrieval-Augmented Generation)

A technique in which the LLM is enriched with external, specific knowledge bases so that it makes more precise statements with far fewer hallucinations.

Voice Agent

An AI system that transcribes spoken language in real time (Speech-to-Text), generates a response, and speaks it with a natural-sounding voice (Text-to-Speech).

Barge-in

The ability of an automated voice system to recognize when the human speaker interrupts it, and to immediately stop speaking and listen.

Human Handoff

The seamless transfer process from a bot to a real human support employee during an ongoing conversation.