Introduction: The New Age of Digital Warfare

The year is 2026. The digital landscape has changed more dramatically in the last five years than in the two decades before. While companies, governments, and individuals are diving deeper and deeper into the connected world, an equally rapid evolution is lurking in the shadows: that of cybercrime. It is no longer the lonely hacker in a hoodie sitting in a dark basement trying to guess passwords. This image is a thing of the past, just like the idea that a simple firewall and a virus scanner would be enough to protect a company. Today, we face highly organized syndicates that use state-of-the-art technologies to achieve their goals. These criminal organizations operate like multinational corporations, with research departments, HR teams, and a clear return on investment (ROI). Their weapon of choice? Artificial Intelligence.

But just as there is poison, there is an antidote. The future of IT security no longer lies solely in static firewalls or signature-based virus scanners. It lies in intelligent, learning systems – AI-supported defense mechanisms that detect attacks before they can cause damage. In this detailed article, we will delve deep into the subject matter. We will examine the technical, psychological, and strategic aspects of this new era. Why is AI not just a buzzword, but an absolute necessity for survival? How do these systems work in detail? And what must managing directors and IT managers do now so that they don't end up in tomorrow's headlines?

The relevance of this topic should not be underestimated. Today, a single successful attack can mean not only financial ruin but can also irrevocably destroy the trust of customers and partners. In a time when data is the new gold, the vault that protects it is the most important asset of any company. And this vault must be intelligent. It must breathe, learn, and anticipate. Welcome to the future of cyber defense.


Chapter 1: The Explosive Evolution of the Threat Landscape

From Virus to Autonomous Attacker

Simplistic Viruses

Code patterns (signatures) were static and easy to detect. Manual distribution via email.

Polymorphic Malware

Malware changes its code with every infection. First waves of Ransomware-as-a-Service.

Autonomous Attackers

AI-powered bots scan vulnerabilities in real-time. Deepfakes and adaptive social engineering.

To understand the need for AI-supported security, we must first look at the opposing side. The evolution of malware is frightening. Around the turn of the millennium, we had to deal with worms like "ILOVEYOU" (2000) – destructive but simplistic. It spread as an email attachment to every contact in the victim's address book. Detection was easy: you looked for the specific code pattern (signature).

Today, we have to deal with polymorphic and metamorphic malware that changes its code with every infection to evade detection. But even that is yesterday's news. The new generation of cyberattacks is adaptive and autonomous. Criminal actors use machine learning (ML) to automatically scan and exploit vulnerabilities in systems.
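Why signature matching breaks down against code that changes with every infection can be shown with a minimal sketch. The payload bytes, the XOR "mutation," and the function names are hypothetical stand-ins chosen for illustration, not real malware and not the API of any real scanner.

```python
import hashlib

# Hypothetical "known bad" payload; real signatures come from a vendor database.
KNOWN_PAYLOAD = b"open_mailbox(); send_to_all_contacts(); overwrite_files();"
SIGNATURES = {hashlib.sha256(KNOWN_PAYLOAD).hexdigest()}


def matches_signature(sample: bytes) -> bool:
    """Return True if the sample's hash is in the signature set."""
    return hashlib.sha256(sample).hexdigest() in SIGNATURES


def mutate(sample: bytes, key: int) -> bytes:
    """Toy stand-in for polymorphism: XOR-encode the body with a per-infection key."""
    return bytes(b ^ key for b in sample)


original_detected = matches_signature(KNOWN_PAYLOAD)
variant_detected = matches_signature(mutate(KNOWN_PAYLOAD, key=0x5A))
print(original_detected, variant_detected)  # True False
```

The behavior of the payload is unchanged by the encoding, but its bytes (and therefore its hash) are not, which is why detection has to move from "what the file looks like" to "what the code does."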

Zero-Day Exploits and the Speed of Attack

Another critical element is time. It can take as little as minutes between the disclosure of a security vulnerability and the first attack, and a true zero-day is exploited before the manufacturer even knows it exists. Traditional patch management cycles that take weeks or months don't stand a chance here. Attackers use AI-supported fuzzing tools to test software for vulnerabilities orders of magnitude faster than human testers could. They find the loopholes before the manufacturers can close them.

Once an attacker is in the network, every second counts. Modern ransomware encrypts thousands of files in a few minutes. A human Security Operations Center (SOC) takes an average of 15 to 60 minutes to view, evaluate, and react to an alarm. By then, the network may already be lost. The reaction must occur at machine speed – i.e., in the millisecond range. Only an automated, AI-driven system can do that.

Chapter 2: Why Traditional Security Systems Fail

Traditional Security

- Signature-based: Only detects known threats.

- Static: Rigid rules (firewalls), no context.

- Reactive: Only acts after damage is done.

- Overwhelming: Too many manual alerts for humans.

AI-Powered Defense

- Anomaly Detection: Finds unknown zero-day attacks.

- Adaptive: Learns user behavior and understands context.

- Proactive: Anticipates attacks before they launch.

- Autonomous: Reacts in milliseconds (SOAR).

For a long time, companies relied on the classic "castle wall strategy" (perimeter security): a strong outward-facing firewall and antivirus software on the end devices. Inside the castle walls, everyone was trusted. This model has become obsolete in the age of cloud computing, remote work, and IoT (Internet of Things).

Chapter 3: AI as a Game Changer on the Defensive

This is where Artificial Intelligence enters the stage of defense. If the attackers use AI, the defenders must do so too. It is a "machine against machine" battle, where humans retain strategic oversight.

Technology Deep Dive: How does AI security work?

Excursion: Supervised vs. Unsupervised Learning

Two types of machine learning are primarily used in IT security:

- Supervised Learning: The AI is trained with huge datasets of "benign" and "malicious" code. It learns to recognize features of malware (e.g., certain API calls). This is highly effective against variants of known attacks.

- Unsupervised Learning: Here the AI knows no division into "good" or "evil." It simply analyzes the data stream in the network and learns what is "normal" (baseline). If behavior deviates from this (anomaly), it sounds the alarm. This is crucial for zero-day attacks and insider threats.
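The difference between the two approaches can be sketched in a few lines. This is a deliberately tiny illustration, assuming invented features and data: a nearest-centroid classifier stands in for supervised learning, and a mean/standard-deviation baseline stands in for unsupervised learning. Production systems use far richer models.

```python
from statistics import mean, stdev

# --- Supervised: labeled examples. Hypothetical features:
# [number of suspicious API calls, share of packed/encrypted sections]
labeled = [
    ([1, 0.05], "benign"), ([0, 0.10], "benign"), ([2, 0.00], "benign"),
    ([9, 0.80], "malicious"), ([7, 0.90], "malicious"), ([8, 0.70], "malicious"),
]


def centroid(rows):
    """Component-wise mean of a list of feature vectors."""
    return [mean(column) for column in zip(*rows)]


centroids = {
    label: centroid([x for x, y in labeled if y == label])
    for label in ("benign", "malicious")
}


def classify(features):
    """Assign the label whose centroid is closest (squared Euclidean distance)."""
    def distance(center):
        return sum((a - b) ** 2 for a, b in zip(features, center))
    return min(centroids, key=lambda label: distance(centroids[label]))


# --- Unsupervised: no labels, only a learned baseline of "normal".
requests_per_minute = [48, 52, 50, 47, 53, 49, 51, 50]  # unlabeled history
baseline_mean = mean(requests_per_minute)
baseline_std = stdev(requests_per_minute)


def is_anomalous(value, threshold=3.0):
    """Flag values more than `threshold` standard deviations from the baseline."""
    return abs(value - baseline_mean) / baseline_std > threshold


print(classify([8, 0.75]))                  # malicious
print(is_anomalous(51), is_anomalous(400))  # False True
```

The supervised half can only recognize what resembles its labeled training data, while the unsupervised half needs no labels but also cannot say why a value is unusual, only that it is.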

Pattern Recognition and Anomaly Detection

The greatest strength of AI and machine learning in IT security is granular anomaly detection. An AI system learns the "normal state" of every user and device in the network. It knows what normal data traffic looks like, which employees work when, which applications communicate how. Every deviation from this normal state, however subtle it may be, is recognized immediately.

Example: A printer suddenly starts sending data streams to an unknown server on the internet. To a firewall, this might look like normal outbound traffic (port 80/443). To an AI, it is highly suspicious, as printers normally don't show this communication pattern. This is how IoT devices, which are often poorly secured, can be monitored.
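The printer example can be turned into a small per-device baseline check. The following is only a sketch under stated assumptions: the flow records, device names, the 10.0.0.0/8 internal range, and the factor of 10 for "unusual volume" are all hypothetical, and a rule-like baseline replaces the learned model a real product would use.

```python
from collections import defaultdict
from ipaddress import ip_address, ip_network

INTERNAL_NET = ip_network("10.0.0.0/8")  # assumed internal address range

# Hypothetical flow records from a learning period: (device, destination, bytes sent)
history = [
    ("printer-3f", "10.0.0.5", 1_200),
    ("printer-3f", "10.0.0.5", 900),
    ("printer-3f", "10.0.0.9", 1_500),
    ("laptop-17", "198.51.100.7", 80_000),
]

known_destinations = defaultdict(set)
max_bytes = defaultdict(int)
for device, dest, size in history:
    known_destinations[device].add(dest)
    max_bytes[device] = max(max_bytes[device], size)


def deviations(device, dest, size, volume_factor=10):
    """Return the reasons a flow deviates from the device's learned baseline."""
    reasons = []
    if dest not in known_destinations[device]:
        reasons.append("new destination")
        if ip_address(dest) not in INTERNAL_NET:
            reasons.append("destination outside internal network")
    if size > volume_factor * max_bytes[device]:
        reasons.append("volume far above baseline")
    return reasons


print(deviations("printer-3f", "10.0.0.5", 1_000))            # []
print(deviations("printer-3f", "203.0.113.50", 5_000_000))    # three reasons
```

Note that the same outbound connection would be unremarkable for the laptop; the baseline is kept per device, which is what gives the check its context.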

Predictive Security: Foreseeing Attacks

Predictive security goes one step further. By analyzing global threat data, trends on the darknet, and patterns from millions of attacks worldwide, an AI can calculate probabilities. It can predict which systems are most likely to be attacked and where security vulnerabilities could arise even before they are actively exploited. This allows proactive action instead of reactive "firefighting." Companies can strengthen their defense walls before the enemy even attacks.
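How "calculating probabilities" can translate into a prioritized list of systems is sketched below. The indicators, weights, bias, and asset names are invented for illustration; a real predictive model would learn its parameters from threat data rather than use hand-picked numbers.

```python
import math

# Hypothetical, hand-picked weights; a real model would learn these from data.
WEIGHTS = {
    "internet_facing": 1.5,
    "unpatched_critical": 0.8,
    "threat_feed_mentions": 0.4,
}
BIAS = -3.0

# Hypothetical asset inventory with current indicator values.
assets = {
    "vpn-gateway": {"internet_facing": 1, "unpatched_critical": 2, "threat_feed_mentions": 3},
    "hr-fileserver": {"internet_facing": 0, "unpatched_critical": 1, "threat_feed_mentions": 0},
    "build-server": {"internet_facing": 0, "unpatched_critical": 3, "threat_feed_mentions": 1},
}


def attack_probability(features):
    """Logistic function over a weighted sum of risk indicators."""
    z = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1 / (1 + math.exp(-z))


# Harden the most likely targets first.
ranking = sorted(assets, key=lambda name: attack_probability(assets[name]), reverse=True)
for name in ranking:
    print(f"{name}: {attack_probability(assets[name]):.2f}")
```

The value of such a score lies less in the absolute number than in the ordering it produces, which tells the team where to patch and harden first.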

Automated Response (SOAR)

Detection is good, action is better. Security Orchestration, Automation, and Response (SOAR) is an area where AI shines. When an attack is detected, the system can autonomously initiate countermeasures: isolate the affected computer from the network, lock user accounts, stop malicious processes, adjust firewall rules. All of this happens in milliseconds, 24/7, without an administrator having to be woken in the middle of the night. This drastically reduces "dwell time" (the time an attacker spends unnoticed in the system) and limits the damage. This is particularly crucial with ransomware, where every second can mean thousands more encrypted files.
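The countermeasures listed above are typically organized as playbooks: ordered response steps per alert type. A minimal sketch follows, assuming a stub `Responder` that only records what it would do. The class names, playbook contents, and alert fields are hypothetical and do not reflect any specific SOAR product.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    kind: str
    host: str
    user: str
    pid: int


@dataclass
class Responder:
    """Stub that only records actions; a real one would call EDR, IAM and firewall APIs."""
    log: list = field(default_factory=list)

    def kill_process(self, alert):
        self.log.append(f"kill process {alert.pid} on {alert.host}")

    def isolate_host(self, alert):
        self.log.append(f"isolate {alert.host}")

    def lock_account(self, alert):
        self.log.append(f"lock account {alert.user}")

    def block_egress(self, alert):
        self.log.append(f"block outbound traffic from {alert.host}")


# Hypothetical playbooks: ordered response steps per alert type.
PLAYBOOKS = {
    "ransomware": ["kill_process", "isolate_host", "lock_account"],
    "data_exfiltration": ["block_egress", "lock_account"],
}


def respond(alert, responder):
    """Run every step of the matching playbook; unknown alert types go to a human."""
    steps = PLAYBOOKS.get(alert.kind)
    if steps is None:
        responder.log.append(f"escalate {alert.kind} to analyst")
        return responder.log
    for step in steps:
        getattr(responder, step)(alert)
    return responder.log


actions = respond(Alert("ransomware", "pc-042", "j.doe", 4711), Responder())
print(actions)
```

Falling back to a human analyst for unknown alert types keeps the automation predictable: it only acts autonomously where a response has been defined and reviewed in advance.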

Chapter 4: Application Examples and Case Studies

Case Study 1: Thwarted CEO Fraud

Scenario: Perfectly imitated email from the CEO requests a million-dollar transfer.

AI Success: Natural Language Processing (NLP) detected minimal semantic deviations from the CEO's usual writing style. The email was quarantined before the accountant could act on it.
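The idea of comparing an incoming email against a sender's usual writing style can be sketched with a much simpler technique than the NLP model in the case study: character-trigram profiles compared by cosine similarity. The sample emails and function names are hypothetical, and this stylometric shortcut is a stand-in, not the method used in the scenario.

```python
import math
from collections import Counter


def trigram_profile(text):
    """Count overlapping character trigrams of the lower-cased, whitespace-normalized text."""
    normalized = " ".join(text.lower().split())
    return Counter(normalized[i:i + 3] for i in range(len(normalized) - 2))


def cosine_similarity(a, b):
    """Cosine of the angle between two trigram count vectors (0 = unrelated, 1 = identical)."""
    dot = sum(a[gram] * b[gram] for gram in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical archive of genuine emails from the sender.
known_emails = [
    "Hi team, quick update on the quarterly numbers. Please send me the report by Friday. Thanks, Anna",
    "Hi team, please review the attached budget before Friday. Thanks, Anna",
]
baseline = trigram_profile(" ".join(known_emails))


def style_similarity(email_text):
    """Similarity between an email and the sender's historical writing style."""
    return cosine_similarity(trigram_profile(email_text), baseline)


routine = "Hi team, please send me the budget report by Friday. Thanks, Anna"
urgent = "Dear Sir, I require an urgent wire transfer immediately. Kindly proceed now. Regards."
print(round(style_similarity(routine), 2), round(style_similarity(urgent), 2))
```

An email whose similarity falls well below the sender's usual range would be held back for review; where exactly that threshold lies has to be calibrated on real mail, which is why none is hard-coded here.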

Case Study 2: Ransomware Stopped in 200 ms

Scenario: Employee unknowingly activates a ransomware dropper.

AI Success: The EDR solution recognized the anomalous process call immediately. Within milliseconds, the computer was isolated – no data loss.
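What an "anomalous process call" check might look like can be sketched as a comparison of process launches against a baseline of parent/child pairs. The process names, the baseline, and the three possible verdicts are assumptions for illustration; real EDR products evaluate many more signals than the launch chain alone.

```python
# Hypothetical baseline of (parent, child) process pairs seen during normal operation.
BASELINE_PAIRS = {
    ("explorer.exe", "winword.exe"),
    ("explorer.exe", "chrome.exe"),
    ("services.exe", "svchost.exe"),
}
# Script hosts are rarely legitimate children of document applications.
DOCUMENT_APPS = {"winword.exe", "excel.exe", "acrord32.exe"}
SCRIPT_HOSTS = {"powershell.exe", "cmd.exe", "wscript.exe"}


def evaluate_process_start(parent, child):
    """Return 'allow', 'alert' or 'isolate' for a newly started process."""
    pair = (parent.lower(), child.lower())
    if pair in BASELINE_PAIRS:
        return "allow"
    if pair[0] in DOCUMENT_APPS and pair[1] in SCRIPT_HOSTS:
        # Typical dropper pattern: a document spawns a script interpreter.
        return "isolate"
    return "alert"


print(evaluate_process_start("explorer.exe", "winword.exe"))   # allow
print(evaluate_process_start("WINWORD.EXE", "powershell.exe"))  # isolate
print(evaluate_process_start("explorer.exe", "newtool.exe"))    # alert
```

Because the decision is a set lookup made at process start, it can be taken before the spawned process has done meaningful work, which is what makes millisecond-range isolation plausible.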

Case Study 3: Insider Threat Detection

Scenario: An employee secretly copies research data before leaving.

AI Success: UEBA (User and Entity Behavior Analytics) systems noticed access to unusual folders and correlated it with visits to job portals. The data theft was stopped.
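The correlation step in this scenario, where several individually weak signals add up to one strong one, can be sketched as a simple risk score. The user, folder paths, weights, and threshold below are hypothetical, and a fixed-weight sum stands in for the statistical models a UEBA product would use.

```python
from dataclasses import dataclass


@dataclass
class DailyActivity:
    user: str
    folders: set
    megabytes_copied: float
    job_portal_visits: int


# Hypothetical per-user baselines learned from past behavior.
USUAL_FOLDERS = {"alice": {"/projects/alpha", "/shared/templates"}}
USUAL_MB_PER_DAY = {"alice": 40.0}

# Hypothetical weights; each signal alone is weak, the combination is not.
WEIGHTS = {"unusual_folders": 40, "bulk_copy": 40, "job_portals": 20}
ALERT_THRESHOLD = 80


def risk_score(activity):
    """Sum the weights of all signals that deviate from the user's baseline."""
    score = 0
    if activity.folders - USUAL_FOLDERS.get(activity.user, set()):
        score += WEIGHTS["unusual_folders"]
    if activity.megabytes_copied > 10 * USUAL_MB_PER_DAY.get(activity.user, 0.0):
        score += WEIGHTS["bulk_copy"]
    if activity.job_portal_visits > 0:
        score += WEIGHTS["job_portals"]
    return score


normal = DailyActivity("alice", {"/projects/alpha"}, 35.0, 0)
leaving = DailyActivity("alice", {"/projects/alpha", "/research/patents"}, 2_500.0, 6)
for day in (normal, leaving):
    print(day.user, risk_score(day), risk_score(day) >= ALERT_THRESHOLD)
```

With these weights, visiting a job portal alone never triggers an alert; only the combination with unusual access and bulk copying crosses the threshold.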

Chapter 5: The Human Factor in an AI World

Does all this mean we no longer need IT security experts? That humans are becoming superfluous? Quite the opposite. The role of humans is changing, but it is becoming more important than ever. AI is a tool, not a replacement.

AI as Co-Pilot, not Autopilot

Security analysts are not replaced, but relieved. The AI takes over the Sisyphean task: scouring terabytes of log data, sorting out false positives (false alarms). Thousands of alarms come into an average SOC every day. People suffer from "alert fatigue" – they get tired and overlook important warnings. The AI pre-filters, prioritizes, and prepares the data. This gives experts the freedom to concentrate on the truly complex cases, make strategic decisions, and monitor and train the AI systems ("human-in-the-loop"). We are talking about "augmented intelligence" – the expansion of human intelligence through machine capacity.
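The pre-filtering and prioritizing described above can be illustrated with a small triage function. The alert names, severity scale, and asset criticality ratings are invented for this sketch, and a fixed scoring rule stands in for a learned ranking model.

```python
from collections import Counter

SEVERITY = {"low": 1, "medium": 3, "high": 7}
# Hypothetical criticality ratings for asset classes.
ASSET_CRITICALITY = {"workstation": 1, "file-server": 3, "domain-controller": 5}

# Hypothetical raw alerts: (rule, asset class, severity)
raw_alerts = [
    ("port-scan", "workstation", "low"),
    ("port-scan", "workstation", "low"),
    ("port-scan", "workstation", "low"),
    ("macro-execution", "workstation", "medium"),
    ("credential-dumping", "domain-controller", "high"),
]


def triage(alerts):
    """Collapse duplicate alerts and sort the rest by severity x asset criticality."""
    queue = [
        {
            "rule": rule,
            "asset": asset,
            "count": count,
            "priority": SEVERITY[severity] * ASSET_CRITICALITY[asset],
        }
        for (rule, asset, severity), count in Counter(alerts).items()
    ]
    return sorted(queue, key=lambda item: item["priority"], reverse=True)


for item in triage(raw_alerts):
    print(item)
```

Five raw alerts become three queue entries, with the credential-dumping alert on the domain controller at the top; the analyst sees what matters first instead of three copies of the same port scan.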

Psychology of Security: Social Engineering

Interestingly, perfected technology makes people the most attractive target. If the firewall is insurmountable, you just hack the person. AI makes social engineering more dangerous (as mentioned, through deepfakes). Employee training must therefore benefit from AI as well. Instead of boring standard training courses, AI systems can create simulated phishing campaigns tailored to the individual employee. Anyone who frequently clicks on simulated phishing links receives targeted training on exactly that. This strengthens the "human firewall" more effectively than blanket measures.

Ethical Considerations and "Adversarial AI"

We must also face the risks. Attackers can try to fool the AI systems ("adversarial attacks"). By minimally manipulating input data (e.g., pixels in an image or bytes in a file), an AI can be made to classify malware as harmless (an "evasion attack"). Attackers can also slip manipulated samples into the training data so that the model learns the wrong patterns from the start (a "poisoning attack"). It's a constant game of cat and mouse in which the AI models must be made robust against such attacks. In addition, privacy issues must be clarified if AI systems monitor employee behavior so closely. Transparency (Explainable AI) and ethics are of central importance here.
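How a small manipulation of the input can flip a model's verdict (commonly called an evasion attack) can be demonstrated on a toy linear classifier. The features, weights, and step size are made up for illustration; real detection models and real attacks operate on far more dimensions.

```python
# Hypothetical linear malware scorer: a score above 0 means "malicious".
# Features: [suspicious imports, section entropy, share of benign-looking strings]
WEIGHTS = [0.9, 0.6, -0.8]
BIAS = -0.5


def score(features):
    return sum(w * v for w, v in zip(WEIGHTS, features)) + BIAS


def label(features):
    return "malicious" if score(features) > 0 else "benign"


def evade(features, step=0.05, max_steps=50):
    """Nudge every feature slightly against the sign of its weight until the label flips."""
    current = list(features)
    for _ in range(max_steps):
        if label(current) == "benign":
            break
        current = [v - step * (1 if w > 0 else -1) for v, w in zip(current, WEIGHTS)]
    return current


sample = [0.6, 0.5, 0.2]
adversarial = evade(sample)
print(label(sample), label(adversarial))
print([round(a - s, 2) for a, s in zip(adversarial, sample)])
```

Each feature moves by only about 0.1, yet the classification changes from malicious to benign. Defenses such as adversarial training aim to make the decision boundary less sensitive to exactly this kind of nudge.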

Chapter 6: The Regulatory Framework (NIS2, DORA, GDPR)

NIS2 Directive

Requires security measures that reflect the "state of the art". Ignoring AI defense today can increasingly be seen as negligent.

DORA (Finance)

Focus on digital resilience. Systems must isolate attacks and maintain operations.

GDPR

Minimize liability risks through proactive protection of personal data.

Lawmakers have read the signs of the times and responded with an unprecedented wave of regulation. Foremost among these is the EU's NIS2 Directive (Network and Information Security Directive 2), which member states were required to transpose into national law by October 2024.

Chapter 7: Strategic Guide for Companies

Inventory

Identify your "crown jewels" and assets. An expert audit is the foundation.

Hybrid Approach

Prioritize email (main entry point) and endpoints (EDR) for AI integration.

Data Quality

Ensure structured logs. Central collection (SIEM) is the cornerstone.

Managed Services

Leverage external expertise (MSSP) to compensate for the skills shortage.

Regular Testing

Validate your AI defense through professional penetration testing.
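The "Data Quality" step above asks for structured logs that a SIEM can collect centrally. One common way to get there is to emit each log event as a JSON object. The sketch below uses Python's standard `logging` module; the `JsonFormatter` class, the `fields` key, and the example event are assumptions for illustration and not a required format of any particular SIEM.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record):
        event = {
            "timestamp": self.formatTime(record, "%Y-%m-%dT%H:%M:%S%z"),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Structured fields are passed via `extra={"fields": {...}}` (our own convention).
        event.update(getattr(record, "fields", {}))
        return json.dumps(event)


stream = io.StringIO()  # stands in for a file or a forwarder to the SIEM
handler = logging.StreamHandler(stream)
handler.setFormatter(JsonFormatter())

log = logging.getLogger("auth")
log.addHandler(handler)
log.setLevel(logging.INFO)
log.propagate = False

log.info("login failed", extra={"fields": {"user": "alice", "src_ip": "10.0.0.23"}})

parsed = json.loads(stream.getvalue())
print(parsed["message"], parsed["user"], parsed["src_ip"])
```

Because every event is machine-parseable with named fields, downstream analytics can filter on `user` or `src_ip` directly instead of relying on fragile text patterns.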

"IT security is not a state, but a process. AI is the turbo for this process, but the driver still has to be a human with a strategy." – CTO, Pragma-Code.

Chapter 8: Looking into the Crystal Ball – IT Security 2030

Looking even further into the future, we see a world of "Autonomous Security." Systems will patch themselves, configure themselves, and defend themselves without human intervention. We will see networks that behave organically, absorb attacks, and adapt like a biological immune system. Software will be "Secure by Design," written by AI assistants that do not allow insecure code.

But the threats will also evolve. We will see swarms of AI bots launching coordinated attacks on infrastructure. The use of quantum computers on the attacker's side could make today's encryption obsolete ("Y2Q" problem). Therefore, "crypto agility" – the ability to quickly swap encryption algorithms for quantum-safe algorithms – is an important future topic that is already being researched today.
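Crypto agility is mostly a design property: the algorithm is chosen through a registry and recorded next to the data, so it can be swapped in one place while old data stays verifiable. The sketch below illustrates the pattern with keyed hashes from Python's standard library because quantum-safe encryption schemes are not part of it. The registry, token format, and function names are hypothetical, and SHA3-512 merely plays the role of "the new algorithm."

```python
import hashlib
import hmac

# Registry of available algorithms; supporting a new one means adding an entry here.
ALGORITHMS = {
    "sha256": hashlib.sha256,
    "sha3_512": hashlib.sha3_512,
}
CURRENT_ALGORITHM = "sha256"  # the single switch flipped during a migration


def sign(key, message, algorithm=None):
    """Return '<algorithm>$<hex digest>' so the algorithm travels with the data."""
    name = algorithm or CURRENT_ALGORITHM
    digest = hmac.new(key, message, ALGORITHMS[name]).hexdigest()
    return f"{name}${digest}"


def verify(key, message, token):
    """Verify a token with whichever registered algorithm produced it."""
    name, _, digest = token.partition("$")
    if name not in ALGORITHMS:
        return False
    expected = hmac.new(key, message, ALGORITHMS[name]).hexdigest()
    return hmac.compare_digest(expected, digest)


key, message = b"demo-key", b"quarterly-report.pdf"
old_token = sign(key, message, "sha256")
new_token = sign(key, message, "sha3_512")
print(verify(key, message, old_token), verify(key, message, new_token))  # True True
```

Callers never name an algorithm themselves, so migrating means changing `CURRENT_ALGORITHM` and, once old tokens have been re-issued, removing the retired entry from the registry.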

Virtual reality and the metaverse will offer new attack surfaces (biometric hijacking, virtual identity theft). And finally, the interface between humans and machines (brain-computer interfaces) will become the ultimate frontier of IT security. It remains an eternal race, but with AI we at least have a chance of not being left behind.

Conclusion: Act Before It's Too Late

The message of this article is clear and unequivocal: AI in IT security is no longer a "nice-to-have" luxury, but a hard necessity. The threats are too fast, too complex, too numerous, and too intelligent for purely human or rule-based defense. Anyone who still relies on the security strategies of 2020 today is acting negligently and risking the survival of their company.

This is not about inciting fear but exercising realism. The tools are there. They are powerful and now affordable for medium-sized businesses – especially through managed service models. The introduction of AI security is an investment in the survivability of your company.

At Pragma-Code, we deeply understand these challenges. We specialize in state-of-the-art IT security solutions that use AI and machine learning to proactively protect your business. We analyze your architecture, implement the right systems, and monitor them around the clock. Don't wait for the first ransomware screen on your monitor. Take your security into your own hands – with the power of Artificial Intelligence by your side.

Glossary: Important Terms in AI Security

To navigate the world of modern cybersecurity, it's important to understand the terminology. Here is a detailed glossary of the key terms used in this article and in the industry.

Advanced Persistent Threat (APT)

An advanced, sustained attack in which an unauthorized user gains access to a network and stays there undetected for a prolonged period. The goal is usually data theft rather than immediate destruction. AI tools help APTs blend in better.

Adversarial Machine Learning

A technique where attackers attempt to fool machine learning models by providing manipulated input data. This is an attempt to confuse the AI's "senses."

Behavioral Analytics

The use of data analysis to recognize patterns in the behavior of users or entities. Deviations from the norm often indicate security incidents. This is the core of many modern AI security solutions.

Botnet

A network of private computers infected with malware and controlled remotely by criminals without the owners' knowledge. Botnets are often used for DDoS attacks or sending spam.

CISO (Chief Information Security Officer)

The senior executive in a company responsible for information security. They bear the strategic responsibility for protecting corporate data.

Deepfake

Synthetic media generated using artificial intelligence, where one person in an existing image or video is replaced with the likeness of another person. In a security context, deepfakes are often used for fraud (e.g., CEO fraud).

DDoS (Distributed Denial of Service)

An attack that overwhelms a server or network with a flood of requests so it is no longer accessible to legitimate users. AI can help to intelligently manage or repel these attacks.

Endpoint Detection and Response (EDR)

Security technology that monitors endpoint devices (computers, smartphones) to detect and respond to cyber threats. EDR goes beyond pure antivirus software.

Exploit

A piece of software, a chunk of data, or a sequence of commands that takes advantage of a security vulnerability (bug) in an application or system to force unintended behavior.

Honeypot

A decoy system intentionally configured insecurely to attract attackers. The goal is to study their methods and distract them from the real network.

Intrusion Detection System (IDS) / Intrusion Prevention System (IPS)

Devices or software applications that monitor a network or systems for malicious activity (IDS) and, in the case of an IPS, actively block it.

Malware

A portmanteau of "malicious" and "software". This includes viruses, worms, trojans, ransomware, spyware, and adware.

Phishing

The attempt to obtain personal data from an internet user via fake websites, emails, or short messages. Spear phishing is the targeted variant against specific individuals.

Ransomware

Malicious software that blocks access to a computer system or its data or encrypts it, demanding a ransom from the victim for the release.

Security Operations Center (SOC)

A central unit in a company responsible for all security issues at organizational and technical levels. All threads and alarms converge here.

Zero-Day Exploit

An attack that exploits a security vulnerability that is not yet known to the software vendor (they had "zero days" to fix it).

Zero Trust

A security concept that assumes no user or device is trusted by default, even if they are inside the corporate network. "Never trust, always verify."

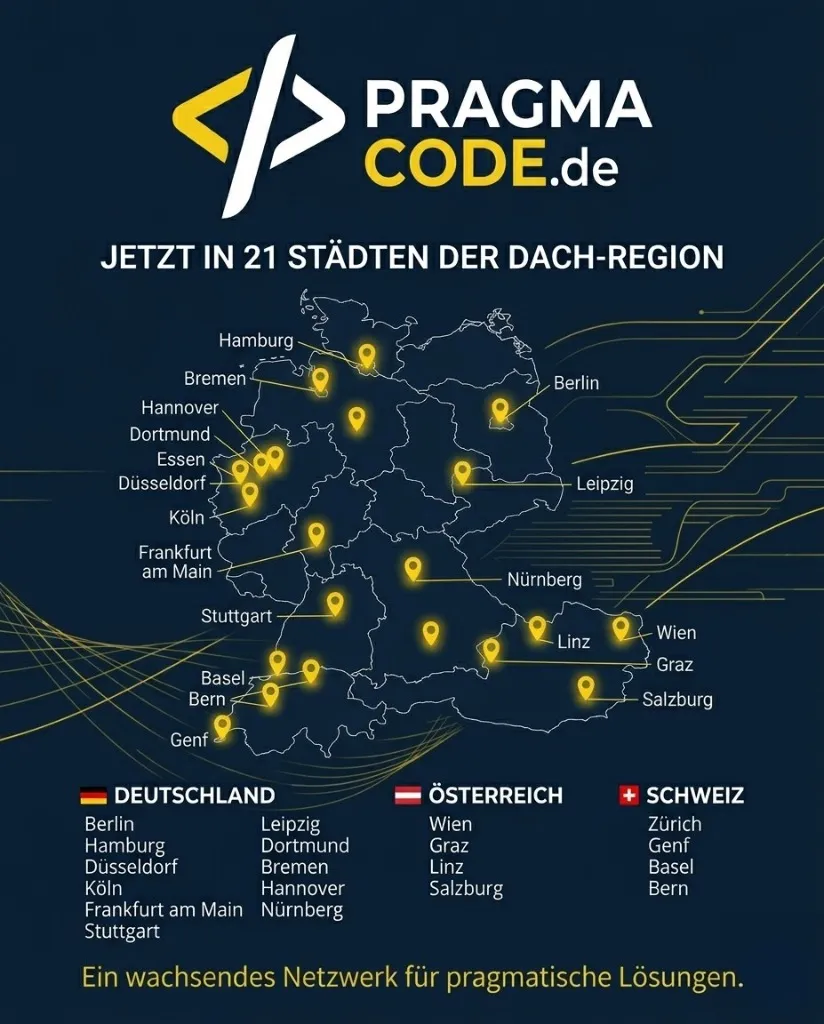

Our Regional Expertise

We are your digital partner – regionally anchored and successfully scaling across borders.

Is your company ready for the future of security?

Let's find your vulnerabilities together before attackers do. Contact us today for a non-binding security audit.

Schedule Free Initial Consultation

Or contact us directly for individual advice: [email protected]