The European Union has set a global milestone with the **AI Act (AI Regulation)**. It is the world’s first comprehensive law for regulating Artificial Intelligence, carrying far-reaching implications for businesses of all sizes. Often referred to as the "GDPR for AI," the regulation aims to ensure that AI systems deployed within the EU are safe, ethical, and in alignment with fundamental rights.

For Small and Medium-sized Enterprises (SMEs), this represents a turning point. While the last few years were marked by experimentation and the rapid adoption of tools like ChatGPT, we are now entering a phase of professionalization and regulatory accountability. From August 2026, most provisions become binding. Those who fail to prepare in time risk not only heavy fines but also exclusion from the supply chains of large corporations, which are already auditing their suppliers for AI Act compliance.

Introduction: Why the AI Act is Redefining the Digital Economy

Artificial Intelligence is no longer a distant futuristic concept. It is integrated into our daily work—from automated invoice processing to AI-powered customer communication. However, with the power of algorithms come risks: discrimination through biased data, a lack of transparency in decision-making, or the danger of manipulation.

The AI Act follows a **risk-based approach**: the higher the risk an AI system poses to society, the stricter the rules. While a simple spam filter remains largely unregulated, AI systems in HR or critical infrastructure are subject to extensive requirements.

"The AI Act is not an innovation killer; it is a trust anchor. In a world where AI content and decisions are ubiquitous, certified safety becomes the most important competitive factor for 'Made in Europe'."

The Roadmap through 2027: Key Deadlines at a Glance

Companies have no time to lose. Implementation occurs in several stages, with the first prohibitions taking effect shortly after entry into force.

**2 February 2025.** All AI practices classified as "unacceptable" are prohibited from this date. These include social scoring, real-time remote biometric identification (with exceptions), and manipulative AI. Furthermore, companies must be able to demonstrate **AI Literacy** (Art. 4) from this point, which means employees must be trained.

**2 August 2025.** Rules for general-purpose AI models (foundation models) like GPT-5.5 or Llama 3 become effective. Providers must maintain technical documentation and comply with copyright requirements. Providers of models with "systemic risks" (high computing power) must additionally perform evaluations and report incidents.

**2 August 2026.** The majority of regulations for **High-Risk systems** (Annex III) become binding. This includes applications in education, human resources, or creditworthiness assessment. SMEs must have implemented their compliance management systems by this date.

**2 August 2027.** AI systems built as safety components into products (e.g., medical technology, elevators, toys) must now demonstrate full conformity according to the applicable sector rules and the AI Act.

The Core: The 4 Risk Categories in Detail

To understand which obligations apply to you, you must classify your AI applications. The AI Act distinguishes four levels:

1. Unacceptable Risk

These systems are **banned** in the EU. This includes AI for behavioral manipulation, social scoring, and real-time remote biometric identification in public spaces (with narrow exceptions). Duty: deactivate such systems immediately; the ban has applied since February 2025.

2. High-Risk Systems

This is the area with the most extensive requirements. It covers AI in sensitive sectors such as HR (recruiting), banking (credit scoring), justice, and critical infrastructure. Requirements: Risk management, data governance, documentation, human oversight.

3. Limited Risk / Transparency

This includes classic chatbots, emotion recognition systems, or Generative AI (images, text). The main duty here is **transparency**: users must know they are interacting with a machine or that content is AI-generated (watermarking).

4. Minimal Risk

The majority of AI used today (spam filters, video games, simple optimization tools) falls under this category. No specific legal duties apply to these systems, though voluntary codes of conduct are encouraged to promote quality.
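As a rough illustration of how the four levels could be applied to an internal list of AI use cases, the following sketch picks the strictest tier that any tag triggers. The tag names and the `classify` function are hypothetical and only mirror the examples in this article; a real classification needs a legal review against the text of the regulation.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = 1
    HIGH = 2
    LIMITED = 3
    MINIMAL = 4


# Hypothetical use-case tags, loosely based on the examples in this article.
# They are not an official or complete list.
PROHIBITED = {"social_scoring", "behavioral_manipulation"}
HIGH_RISK = {"recruiting", "credit_scoring", "education_assessment",
             "critical_infrastructure"}
TRANSPARENCY = {"chatbot", "generative_content"}


def classify(use_cases: set) -> RiskTier:
    """Return the strictest tier triggered by any of the given tags."""
    if use_cases & PROHIBITED:
        return RiskTier.UNACCEPTABLE
    if use_cases & HIGH_RISK:
        return RiskTier.HIGH
    if use_cases & TRANSPARENCY:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


print(classify({"chatbot", "recruiting"}))  # RiskTier.HIGH
print(classify({"spam_filter"}))            # RiskTier.MINIMAL
```

Checking the tiers from strictest to weakest means a system that combines several functions is always treated according to its most sensitive use.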

Deep Dive: High-Risk Sectors under Annex III

Many SMEs underestimate how quickly they can fall into the "high-risk trap." Here are the most critical areas for businesses:

**Employment and HR.** Software that filters applications, evaluates candidates, or decides on promotions is almost always high-risk.

**Education.** AI systems used to assess students or to assign them to educational programs are strictly regulated.

**Financial services.** Determining creditworthiness (credit scoring) or assessing risk and pricing for life or health insurance falls under the high-risk categories of Annex III.

**Critical infrastructure.** AI used as a safety component in managing water, gas, electricity, or transport systems is high-risk, because a failure could pose a risk to life or safety.

General Purpose AI (GPAI): What SMEs Need to Know

GPAI models are AI models that can be used for a wide range of tasks (e.g., foundation models). The AI Act distinguishes between standard models and those with **systemic risks**.

Models trained with cumulative compute of more than 10^25 floating-point operations (FLOPs), like GPT-5.5, are presumed to pose systemic risk and are subject to stricter rules. Providers must not only deliver technical documentation and copyright summaries but also perform adversarial testing and report serious incidents to the EU AI Office.
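To get a feeling for the 10^25 threshold, training compute can be approximated and compared against it. The sketch below uses the common rule of thumb of 6 × parameters × training tokens; that formula is an assumption used for illustration and is not part of the AI Act, and the function names and the example model are hypothetical.

```python
# Threshold named in the article: more than 10^25 FLOPs of training compute.
SYSTEMIC_RISK_FLOPS = 1e25


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Rough estimate via the 6 * N * D rule of thumb (an assumption)."""
    return 6.0 * parameters * training_tokens


def above_systemic_risk_threshold(training_flops: float) -> bool:
    """True only if the compute is strictly above the threshold."""
    return training_flops > SYSTEMIC_RISK_FLOPS


# Hypothetical model: 70 billion parameters, 15 trillion training tokens.
flops = estimate_training_flops(70e9, 15e12)
print(f"{flops:.2e}", above_systemic_risk_threshold(flops))
```

For most SMEs the practical takeaway is that fine-tuning or deploying an existing model stays far below this order of magnitude; the stricter obligations mainly concern the original model provider.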

The 6 Pillars of Compliance for High-Risk AI

If your project is classified as high-risk, you must implement the following technical and organizational measures (TOMs):

**Risk management (Art. 9).** A continuous process throughout the entire AI lifecycle to identify, evaluate, and mitigate known and foreseeable risks through technical measures.

**Data governance (Art. 10).** Training data must be relevant, representative, and as error-free as possible. Particular focus is on detecting and correcting bias (bias mitigation) to prevent discrimination.

**Technical documentation (Art. 11).** Detailed records proving to authorities how the system works, which architecture it uses, and how conformity is ensured. This must be finalized before the system is placed on the market.

**Record-keeping (Art. 12).** The AI system must be technically capable of automatically recording events (logs). This enables the traceability of decisions in the event of errors or discrimination.

**Transparency (Art. 13).** Users must receive clear instructions for use, informing them of capabilities, limitations, and necessary human oversight. The human user must be able to understand the results.

**Human oversight (Art. 14).** AI systems must be designed so that humans can effectively oversee them. "Human-in-the-loop" control must always be possible, so that a person can stop, override, or disregard the system's decisions.
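The logging and human-oversight requirements above could be supported by writing one structured record per decision. This is a minimal sketch using only the Python standard library; the field names, the logger name `ai_audit`, and the example values are assumptions, and the AI Act does not prescribe a specific log format.

```python
import json
import logging
from datetime import datetime, timezone
from typing import Optional

logger = logging.getLogger("ai_audit")  # hypothetical logger name


def build_audit_record(system_id: str, model_version: str, input_ref: str,
                       output: str, reviewed_by: Optional[str] = None) -> str:
    """Serialize one decision event as a JSON line for later traceability."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "model_version": model_version,
        "input_ref": input_ref,      # a reference, not the raw personal data
        "output": output,
        "reviewed_by": reviewed_by,  # None until a human has checked it
    }
    return json.dumps(record, sort_keys=True)


logging.basicConfig(level=logging.INFO, format="%(message)s")
logger.info(build_audit_record("cv-screening", "1.4.2",
                               "application-0815", "shortlist"))
```

Storing a reference to the input instead of the input itself keeps the log useful for audits while limiting the amount of personal data it contains.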

Special Support for SMEs: The "Sandboxes"

The EU AI Act recognizes that SMEs have fewer resources than Big Tech giants. Therefore, special protection mechanisms have been built in:

**Regulatory sandboxes.** Member states must create controlled environments where SMEs can test their AI solutions under supervision, with simplified documentation requirements.

**Proportionate fees.** Conformity assessment bodies must make their fees proportional for SMEs to lower the barrier for market entry.

**Simplified documentation.** The EU Commission provides standardized and simplified forms for technical documentation to ease the administrative burden.

Liability, Fines, and Personal Responsibility

The sanctions for non-compliance are drastic and in part exceed those of the GDPR:

Up to €35 million or 7% of total global annual turnover (whichever is higher) for prohibited AI practices.

Up to €15 million or 3% of annual turnover for violations of governance, data, or technical documentation rules.

Up to €7.5 million or 1% of annual turnover for providing misleading information to authorities.

Crucial for SMEs: for SMEs and start-ups, the lower of the two amounts applies in each tier, while for all other companies the higher amount applies. Management remains responsible for overseeing the compliance measures.
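The general "whichever is higher" rule is simple arithmetic, sketched here for the prohibited-practices tier. The function `fine_cap` is hypothetical, the turnover figures are made up, and the fixed amount of €35 million is taken from Art. 99(3) of the AI Act; the result is only the upper limit of a fine, not a prediction of the actual penalty.

```python
def fine_cap(fixed_cap_eur: int, turnover_pct: float,
             annual_turnover_eur: int) -> float:
    """Upper limit of a fine under the 'whichever is higher' rule."""
    return max(fixed_cap_eur, annual_turnover_eur * turnover_pct / 100)


# Prohibited-practices tier: EUR 35 million or 7% of global annual turnover.
print(fine_cap(35_000_000, 7, 1_000_000_000))  # 70000000.0
print(fine_cap(35_000_000, 7, 100_000_000))    # 35000000
```

In the first case the percentage dominates because 7% of €1 billion exceeds the fixed amount; in the second the fixed amount is the larger of the two.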

Step-by-Step: Getting "AI Act Ready"

We recommend the following 5-phase model for medium-sized businesses:

Inventory

List all AI systems currently in use or development. Don't forget "shadow IT" (employee private accounts for AI tools).

Classification

Assign each system to its respective risk class. Seek legal advice for borderline cases under Annex III.

Assessment

Perform a gap audit for high-risk systems. Check data quality, transparency requirements, and system robustness.

Training

Implement the AI Literacy duty (Art. 4). Ensure your team understands both how to use AI and its legal boundaries.

Documentation

Start building your compliance file early. Use frameworks like ISO 42001 to structure your documentation effort.
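The five phases above can be tracked per system in a simple inventory. The following sketch is one possible structure, assuming a small in-memory list; the class `AISystemRecord`, its fields, and the example entries are hypothetical and not prescribed by the AI Act or ISO 42001.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    """One entry of a hypothetical AI inventory; field names are illustrative."""
    name: str
    owner: str
    risk_class: Optional[str] = None  # e.g. "high", "limited", "minimal"
    gap_audit_done: bool = False
    staff_trained: bool = False
    documentation_started: bool = False


def next_phase(record: AISystemRecord) -> str:
    """Return the earliest phase of the 5-phase model that is still open."""
    if record.risk_class is None:
        return "classification"
    if record.risk_class == "high" and not record.gap_audit_done:
        return "assessment"
    if not record.staff_trained:
        return "training"
    if not record.documentation_started:
        return "documentation"
    return "done"


inventory = [
    AISystemRecord("CV screening", "HR", risk_class="high"),
    AISystemRecord("Website chatbot", "Marketing"),
]
for record in inventory:
    print(record.name, "->", next_phase(record))
```

In this sketch the gap audit is only required for systems classified as high-risk, which matches the Assessment phase described above.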

Pragma-Code: Your Partner for Regulatory Excellence

The technical implementation of the AI Act requires a deep understanding of data architectures and regulatory requirements. Pragma-Code supports you with:

Compliance Audit

We audit your AI systems for full conformity with Articles 9 to 15 of the EU AI Act.

Infrastructure Hardening

Implementation of industrial-grade logging and monitoring for AI safety and auditability.

Bias Analytics

Advanced statistical analysis of training data to detect and mitigate discriminatory outcomes.

Conclusion: Certified AI as a Hallmark of Quality

The EU AI Act might seem like a bureaucratic burden, but those who embrace it early win. "Safe AI made in Europe" is becoming the globally recognized standard for quality and data protection. SMEs that invest now create a solid foundation for sustainable growth in the era of Artificial Intelligence.

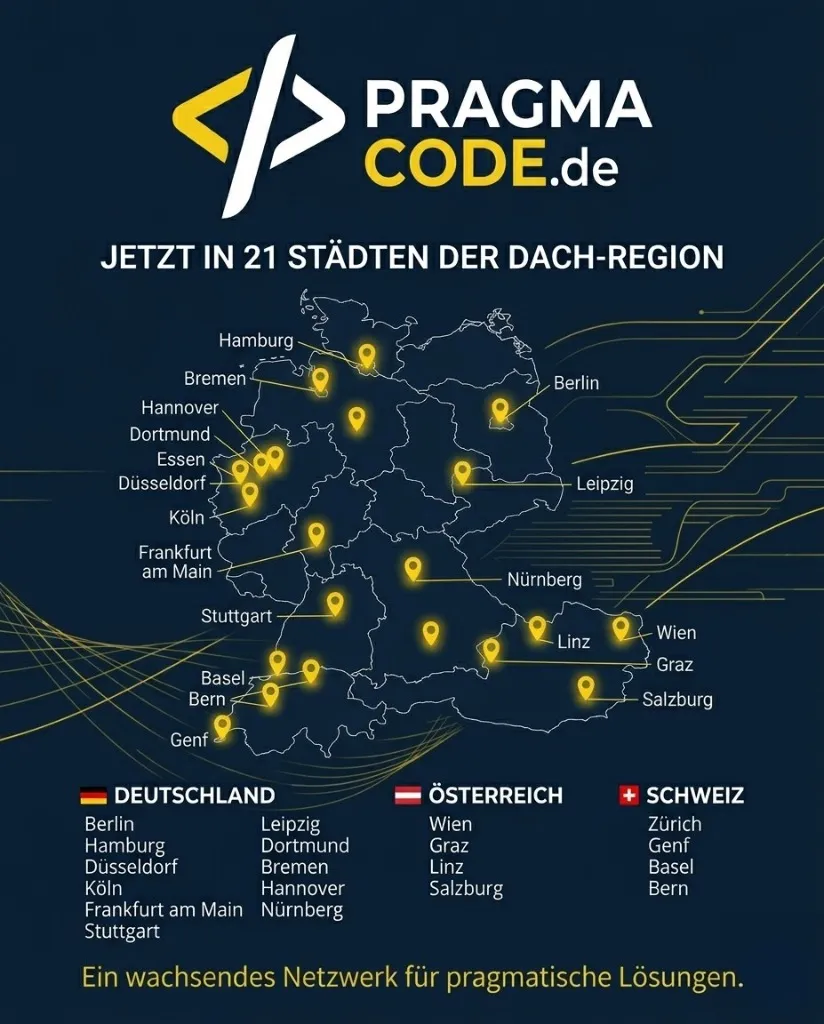


Questions about AI Act Compliance?

Pragma-Code safely guides you through the jungle of the new AI regulation. Let us audit your systems.

Frequently Asked Questions (FAQ)

Does the AI Act apply to US software?

Yes, as soon as an AI service is offered or used in the EU, it must be compliant, regardless of the developer's headquarters.

Do I need to report every small AI tool?

No. Registration in the EU database applies primarily to high-risk systems, and providers of GPAI models with systemic risks must notify the European Commission.

What is the difference from GDPR?

GDPR protects personal data. The AI Act protects safety and fundamental rights from the risks of algorithmic decisions. They complement each other.

How do I recognize bias in my data?

Through statistical testing methods that measure whether certain groups (e.g., by age, gender, or origin) are disadvantaged by the system.
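One simple example of such a test compares the share of positive decisions per group. The sketch below computes selection rates and the ratio of the lowest to the highest rate; the data is made up, the function names are hypothetical, and the AI Act does not prescribe this or any other specific fairness metric.

```python
from collections import defaultdict


def selection_rates(outcomes: list) -> dict:
    """Share of positive decisions per group, from (group, selected) pairs."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in outcomes:
        totals[group] += 1
        positives[group] += int(selected)
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: dict) -> float:
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up screening outcomes for two hypothetical age groups.
sample = ([("under_40", True)] * 6 + [("under_40", False)] * 4
          + [("over_40", True)] * 3 + [("over_40", False)] * 7)
rates = selection_rates(sample)
print(rates, disparate_impact_ratio(rates))
```

A ratio well below 1.0, as in this example, does not prove discrimination on its own, but it signals that the affected group and the underlying data deserve a closer look.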

Is there funding for SMEs?

Yes, through programs like "Digital Europe," the EU provides funds for the implementation of trustworthy AI.