The digital landscape in 2026 is undergoing its most profound transformation since the invention of the search engine: Generative Engine Optimization (GEO) is displacing traditional Search Engine Optimization (SEO). As ChatGPT, Perplexity, Google's AI Overviews, and autonomous AI agents become the primary gateway to information, a search strategy that relies purely on keywords and backlinks is losing its efficacy. In its place emerges a paradigm that has long sat at the core of Google's quality guidelines and has now become the ultimate benchmark: E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness).

When Large Language Models (LLMs) aggregate the combined knowledge of humanity, what matters are not pieced-together texts from content farms, but authentic experiences, deep domain expertise, and an impeccable digital reputation. AI search engines are trained to avoid hallucinations and present users with the most reliable sources. They don't look for text length; they search for truth and credibility. In this comprehensive guide, we will show you how mid-sized and large enterprises can not only survive but stand out as undisputed industry experts in the AI era through the targeted building of Topical Authority, strategic use of Schema Markup 2.0, and the establishment of visible author profiles.

1. What is E-E-A-T and Why AI Models are Addicted to It

E-E-A-T is not a direct technical ranking factor, but a framework from Google's Quality Rater Guidelines used by human evaluators to assess the quality of search results. Their ratings do not rank individual pages directly; Google uses them to evaluate and refine its ranking systems (such as RankBrain, BERT, and newer language models). Specifically, E-E-A-T stands for:

Experience

First-hand observation. Has the author actually used the product? Was the problem solved practically? Pure theoretical knowledge is no longer sufficient in times of omniscient AIs. Original case studies are the key here.

Expertise

Deep, often formal knowledge about a subject area. A tax advisor has expertise in tax issues; a doctor in medicine. It's about demonstrable qualifications, certificates, and years of industry knowledge.

Authoritativeness

How the website and author are perceived by others. Is the site cited by other experts? Are you the go-to resource in your niche (Topical Authority)? This is often shown through mentions in trade media.

Trustworthiness

The most important component at the center of it all. Is the information accurate? Is the site secure (HTTPS)? Are contact details and legal notices transparently communicated? Trust is the currency of the AI era.

Why AI Agents Prefer E-E-A-T Signals

Generative AIs like Perplexity or Google AI Overviews summarize information. Their biggest risk is spreading misinformation ("hallucinations"), which destroys user trust in the platform. Therefore, LLMs in Retrieval-Augmented Generation (RAG) architectures use strong filtering mechanisms to evaluate sources before citing them. An AI would rather pull from a highly structured page written by a verified expert on a domain with high niche authority than a generic wiki article.
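To make the filtering idea concrete, here is a deliberately simplified sketch. It is not how any specific AI search engine works: the `Source` fields, the weights in `trust_score`, and the `min_trust` threshold are all invented for illustration. It only shows the general pattern of discarding low-trust candidates before ranking the rest by relevance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    url: str
    has_named_author: bool     # a visible, verifiable author profile
    has_structured_data: bool  # e.g. JSON-LD markup on the page
    topical_share: float       # hypothetical: share of the domain covering the topic, 0..1
    relevance: float           # hypothetical retrieval score for the query, 0..1


def trust_score(src: Source) -> float:
    """Combine the signals into one number; the weights are assumptions."""
    score = 0.4 * src.topical_share
    score += 0.35 if src.has_named_author else 0.0
    score += 0.25 if src.has_structured_data else 0.0
    return score


def select_citations(candidates: list[Source], min_trust: float = 0.5, k: int = 3) -> list[Source]:
    """Drop low-trust sources first, then keep the k most relevant of the rest."""
    trusted = [s for s in candidates if trust_score(s) >= min_trust]
    return sorted(trusted, key=lambda s: s.relevance, reverse=True)[:k]
```

In this toy model, a generic page with a higher relevance score still loses to an expert page, because it never passes the trust filter.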

"AI agents need anchors in the real world to separate fact from fiction. E-E-A-T is exactly that anchor. Anyone publishing content today without a recognizable human expert footprint will simply be ignored by LLMs." – Alexander Ohl, Pragma-Code

2. Topical Authority: The Foundation of AI Relevance

In the past, websites with high general Domain Authority could rank for almost any keyword, even if they were not specialized in the topic. Today, dominating a niche requires "Topical Authority": a website must cover the relevant aspects of a topic in depth and link the pages that cover them semantically.

How Do You Build Topical Authority?

Building this requires a radical shift away from writing single, disconnected blog posts toward systematically creating "Content Clusters" and "Entity Hubs."

An AI understands concepts, not just strings of characters. Define the core entities of your business area and comprehensively cover all structurally linked subtopics.

Organize your content into Pillar Pages (core topics) and Cluster Pages (specific detailed questions). Link these hierarchically and with precise context.

LLMs have already read billions of texts. To be classified as "new" and "valuable," you must publish things that don't yet exist on the web: your own surveys, internal data analyses, tool benchmarks, or exclusive interviews.

Topical Authority suffers from outdated or irrelevant content on the domain (Content Decay). Ruthlessly delete or update outdated articles to keep the "Information Retrieval" signal pure.

By establishing yourself as a holistic information source, you signal to Google and AI crawlers: "If a user has a question on this topic, I definitely have the right and detailed answer on this domain."
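The pillar-and-cluster structure described above can be checked mechanically. The following is a minimal sketch, assuming you already have a crawl export with each page's internal links; the `Page` class and the function name `cluster_link_gaps` are hypothetical, not part of any SEO tool.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    url: str
    links_to: set[str] = field(default_factory=set)  # internal links found on the page


def cluster_link_gaps(pillar: Page, clusters: list[Page]) -> dict[str, list[str]]:
    """Report cluster pages the pillar does not link to, and clusters that do not link back."""
    missing_from_pillar = [c.url for c in clusters if c.url not in pillar.links_to]
    missing_backlink = [c.url for c in clusters if pillar.url not in c.links_to]
    return {"missing_from_pillar": missing_from_pillar, "missing_backlink": missing_backlink}


# Example with placeholder URLs: one cluster page is not linked from the pillar,
# and another does not link back to it.
pillar = Page("/web-security", {"/web-security/csp"})
clusters = [
    Page("/web-security/csp"),                      # no link back to the pillar
    Page("/web-security/hsts", {"/web-security"}),  # pillar does not link here
]
gaps = cluster_link_gaps(pillar, clusters)
```

Running a check like this after each publication keeps the hierarchy intact as the cluster grows.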

3. Schema Markup 2.0: The Direct API to the AI Brain

The biggest challenge in AI optimization is ensuring that machine systems extract complex contexts on your website flawlessly. This is where Schema.org JSON-LD comes into play. Schema markup is not a new invention, but in the context of GEO, the way we use it has changed drastically. We call this Schema Markup 2.0 (Agentic SEO).

Why Traditional Schema is No Longer Enough

In the past, a simple "Article" schema was enough to appear in Google News. Today, it's about providing the entire relationship between the entity (your company), the author (expert), and the content (knowledge) to the AI. You are building a "Knowledge Graph" directly in the source code.

Key Schema Types for Maximum E-E-A-T

Organization

Defines who is responsible. Contains all contact information, verified social media profiles, the official founding history, awards, and links to independent profiles (Crunchbase, Wikipedia, commercial registers).

Person

The absolute game-changer for E-E-A-T. Link the article's author via the `hasOccupation`, `alumniOf` (University), and `knowsAbout` attributes with specialist areas and other publications. This establishes "Expertise".

Article / BlogPosting

In addition to `headline` and `datePublished`, Schema 2.0 uses `citation` to reference scientific or primary sources supporting the article. The `about` and `mentions` attributes link the article directly with Wikipedia entities.

FAQPage and HowTo

Agents like ChatGPT specifically look for directly extractable question-answer pairs or step-by-step instructions to generate quick summaries. By explicitly using this markup, you deliver bite-sized answers directly into their database.

By interconnecting these schema types (e.g., BlogPosting has an Author which is a Person, working for the Organization), you create an indestructible semantic web showing the AI: This content comes from an audited and authorized source.
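As a rough illustration of this interconnection, the sketch below builds a small JSON-LD `@graph` in Python and checks that every `@id` reference resolves to a node. The domain, names, and URLs are placeholders, and `dangling_references` is a hypothetical helper written for this example; the property names themselves (`worksFor`, `hasOccupation`, `alumniOf`, `knowsAbout`, `citation`, `about`, `sameAs`) come from Schema.org.

```python
import json

BASE = "https://example.com"  # placeholder domain

organization = {
    "@type": "Organization",
    "@id": f"{BASE}/#organization",
    "name": "Example GmbH",
    "url": BASE,
    "sameAs": ["https://www.linkedin.com/company/example"],
}

author = {
    "@type": "Person",
    "@id": f"{BASE}/author/jane-doe#person",
    "name": "Jane Doe",
    "worksFor": {"@id": organization["@id"]},
    "hasOccupation": {"@type": "Occupation", "name": "Tax Advisor"},
    "alumniOf": {"@type": "CollegeOrUniversity", "name": "Example University"},
    "knowsAbout": ["Corporate tax law", "VAT"],
}

article = {
    "@type": "BlogPosting",
    "@id": f"{BASE}/blog/vat-basics#article",
    "headline": "VAT Basics for Mid-Sized Companies",
    "datePublished": "2026-01-15",
    "author": {"@id": author["@id"]},
    "publisher": {"@id": organization["@id"]},
    "about": {
        "@type": "Thing",
        "name": "Value-added tax",
        "sameAs": "https://en.wikipedia.org/wiki/Value-added_tax",
    },
    "citation": ["https://example.org/primary-source"],
}

faq = {
    "@type": "FAQPage",
    "@id": f"{BASE}/blog/vat-basics#faq",
    "mainEntity": [
        {
            "@type": "Question",
            "name": "Who has to charge VAT?",
            "acceptedAnswer": {"@type": "Answer", "text": "Placeholder answer text."},
        }
    ],
}

graph = {"@context": "https://schema.org", "@graph": [organization, author, article, faq]}


def dangling_references(doc: dict) -> list[str]:
    """Return every {"@id": ...} reference that matches no node in the @graph."""
    defined = {node["@id"] for node in doc["@graph"]}
    referenced: set[str] = set()

    def walk(value: object) -> None:
        if isinstance(value, dict):
            if set(value) == {"@id"}:  # a bare reference, not a full node
                referenced.add(value["@id"])
            for child in value.values():
                walk(child)
        elif isinstance(value, list):
            for child in value:
                walk(child)

    walk(doc["@graph"])
    return sorted(referenced - defined)


markup = json.dumps(graph, indent=2)  # ready to embed in a JSON-LD script tag
```

Referencing nodes by `@id` instead of repeating them keeps the author and organization defined once, so every article points at the same entity.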

4. Author Profiles: Making the "Experience" in E-E-A-T Visible

One of the most common mistakes in B2B blogs is the "Admin" blogger. When articles are published without a recognizable author, under the alias "Editorial Team", or by a placeholder, the E-E-A-T signal is effectively zero. Why should a user, or an AI, trust a faceless entity on complex topics?

The Checklist for the Perfect Author Profile (Author Box & Author Page)

To prove "Experience" and "Expertise," every content producer must be real and verifiable. A dedicated author page (`yourdomain.com/author/john-doe`) is mandatory. This page must provide:

Clear Identification and Biography

High-resolution, professional portrait photo. A detailed biography describing the author's career path, education, and specific practical experience ('Experience') in the corresponding niche.

Proof of Subject Matter Expertise (Credentials)

Listing of certificates, academic titles, mentions in trade magazines, book publications, or relevant speaker appearances at industry events.

Networking the Digital Footprint

Links to active, professionally relevant LinkedIn profiles, GitHub repositories (for developers), X accounts, or ResearchGate. The AI scans these links to evaluate the author's external authority.

Article Archive

Curated list of all articles published by the author on this website to demonstrate a consistent focus on the subject matter ("Topical Focus").

The Technical Backend: SameAs

Embedding Person schema markup with the `sameAs` attribute, pointing to social media profiles and external mentions of this author, simplifying entity matching for Google.
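A minimal sketch of that backend piece, assuming the author data lives in your CMS: the function names `person_jsonld` and `as_script_tag` and all URLs are hypothetical, while `sameAs`, `jobTitle`, and the `application/ld+json` script type are standard.

```python
import json


def person_jsonld(name: str, page_url: str, job_title: str, same_as: list[str]) -> dict:
    """Build Person markup for an author page; same_as lists external profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "@id": f"{page_url}#person",
        "name": name,
        "url": page_url,
        "jobTitle": job_title,
        "sameAs": sorted(set(same_as)),  # de-duplicate external profile URLs
    }


def as_script_tag(data: dict) -> str:
    """Serialize to a JSON-LD script tag, escaping '</' so the tag cannot be closed early."""
    payload = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


tag = as_script_tag(
    person_jsonld(
        name="John Doe",
        page_url="https://yourdomain.com/author/john-doe",
        job_title="Senior Security Engineer",
        same_as=[
            "https://www.linkedin.com/in/john-doe-example",
            "https://github.com/john-doe-example",
            "https://github.com/john-doe-example",  # duplicate, removed by the helper
        ],
    )
)
```

The resulting tag belongs in the HTML of the author page itself, so the markup and the visible biography describe the same person.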

5. The Measurable Business Impact: ROI of E-E-A-T and GEO

Many decision-makers struggle to approve budgets for "brand building" or "E-E-A-T signals" because they seem less directly measurable than performance marketing. However, data from 2025 and 2026 tells a different story: Users' loss of trust in mass-generated AI sites drives premium traffic specifically toward authentic experts.

Visibility in AI "Overviews"

Google reports that pages with strong E-E-A-T signals are disproportionately cited in AI Overviews.

+45% Click-Through Rate

Pages linked as sources in generated AI answers experience significantly higher user interaction than traditional blue links.

Conversion Rate for YMYL Topics

"Your Money or Your Life" (Health, Finance, B2B Software, Law) is highly dependent on trust.

+60% Higher Lead Quality

B2B companies with transparent author profiles and case studies receive significantly warmer leads and shorten direct sales cycles.

The Cost of Ignorance

Those who continue to rely on mass content without expert review risk failing the "Helpful Content System" update.

-80% Organic Traffic

In recent updates, sites without E-E-A-T verification were radically penalized by algorithms, losing almost all their traffic to authoritative brands.

6. Implementation Roadmap: Becoming the AI Agent's Favorite

Building genuine authority doesn't happen overnight. It's a procedural and strategic shift in corporate communication. With the following roadmap, you apply the right levers in the coming quarters.

Step 1: Content Audit & Empowering Experts (Month 1)

Evaluate all existing content. Discard outdated blog posts. Identify Subject Matter Experts (SMEs) within your company. Set up technically sound author pages with bios, headshots, and social links for these individuals.

Step 2: Semantic Hub & Entity Strategy (Month 2)

Create a map of your most important topic areas. Consolidate loose blog articles into centralized, comprehensive "Pillar Pages" covering all aspects of a topic, and build a flawless internal linking framework.

Step 3: Agentic SEO & Schema 2.0 Integration (Month 3)

Implement dynamic, deeply nested JSON-LD schema. Link the Person, Organization, FAQPage, and Article schemas to one another. Use `sameAs` links to map your digital footprint and that of your authors across the web.

Step 4: Producing First-Party Data & Insight Content (Months 4-6)

Start creating publications only you can create. Use your internal customer data, project experiences, and case studies. Quote experts, have articles peer-reviewed, and integrate real testimonials.

Step 5: External Authority Expansion (Ongoing)

Encourage your authors to publish on LinkedIn, participate in podcasts, or attend digital conferences. These "Unlinked Mentions" contextually link your experts with subjects across the web—the ultimate authority signal for AI.
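The content audit in Step 1 can be partly automated. The sketch below is one possible triage rule, not a standard: the `Post` fields, the 540-day age limit, and the 50-visit threshold are assumptions you would tune to your own analytics.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Post:
    url: str
    last_updated: date
    has_named_author: bool
    visits_last_90_days: int


def audit(post: Post, today: date, max_age_days: int = 540, min_visits: int = 50) -> str:
    """Return 'keep', 'update', or 'remove' using simple, assumed thresholds."""
    stale = (today - post.last_updated).days > max_age_days
    if stale and post.visits_last_90_days < min_visits:
        return "remove"  # old and unread: candidate for deletion or redirect
    if stale or not post.has_named_author:
        return "update"  # refresh the content or assign a real expert as author
    return "keep"


today = date(2026, 6, 1)
decisions = {
    p.url: audit(p, today)
    for p in [
        Post("/blog/old-news", date(2023, 1, 1), True, 3),
        Post("/blog/evergreen", date(2024, 1, 1), True, 500),
        Post("/blog/anonymous", date(2026, 3, 1), False, 120),
        Post("/blog/fresh", date(2026, 3, 1), True, 120),
    ]
}
```

Treat the output as a worklist for your Subject Matter Experts rather than as an automatic delete list.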

Conclusion: Trust is the Currency of the AI Era

In a time when content can be generated at the push of a button, information is abundant. What is radically scarce is truth, trust, and authentic human experience. Companies that understand they must convince not only humans but highly intelligent information agents will be rewarded with unprecedented visibility.

It is no longer about tricking Google. It is about proving—through excellent, original content, technically perfect markup, and building undeniable expertise—that you are the best, most deeply trusted answer to your customer's problem. At Pragma-Code, we natively integrate these principles of Generative Engine Optimization into every web architecture we build.

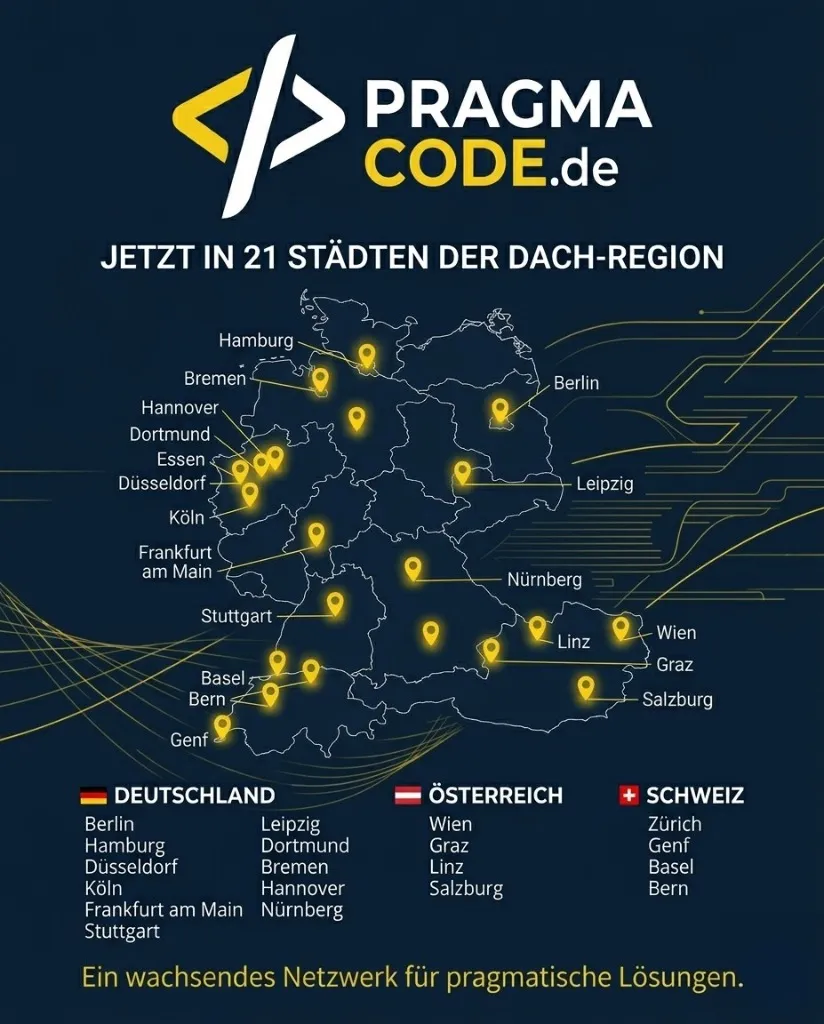

Extended Specialized Glossary

Generative Engine Optimization (GEO)

The practice of optimizing content specifically for AI-powered search engines and LLMs to be cited as the primary, trustworthy source in generated answers (AI Overviews, chatbots).

Topical Authority

The status of a website or author as a recognized expert in a specific field, achieved through extraordinary content depth, semantic networking, and comprehensive coverage of all facets of that niche topic.

Schema Markup 2.0 (Agentic SEO)

The advanced use of Schema.org (JSON-LD) to create deep, interconnected Knowledge Graphs on websites. It directly links authority, content context, and entities so that autonomous AI agents can read, interpret, and use the data reliably.

Retrieval-Augmented Generation (RAG)

An AI architecture in which the base model (LLM) is “fed” with information retrieved from external sources (such as the live internet) before it generates an answer for the user. This is the process behind most modern AI search engines.

Entity Hub

A content structuring method where concepts are organized not by search terms, but by “things” (entities, e.g., “Web Security”), which drastically simplifies machine understanding by AIs.